Guide 4.15.1 - 30 Nov 17

Wotja 4 is now retired. Get the latest version of Wotja!

Wotja 4 User Guide


Archived version


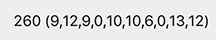

Generative Music Creator & Mixer

Create ideas for music, melodies, MIDI & lyrics... or make & playlist beautiful ambient generative music mixes for sleep & relaxation. Wotja is the consolidation and evolution of Noatikl, Mixtikl, Liptikl, Tiklbox & SSEYO Koan.

Users say of this powerful & unique creative relaxation system: "hands down the best generative software that I have ever used", "Brilliant", "Extremely musical", "Deep and professional", "best MIDI composition tool", "Love Wotja!", "These guys are ***THE*** pioneers along with Eno in generative music".

There's lots to explore, but you don't need to be a musician or expert to use Wotja. Anyone inquisitive can learn to master its power; see our helpful video tutorials.

Subscription and non-subscription versions available.

Platforms: iOS, macOS

"Creative Relaxation"

With Wotja, generate a live stream of music or create a stimulating cut-up and enjoy it as you would enjoy freshly brewed coffee - there's nothing quite like it!

Uses

- Create & record your own custom, high quality, license/royalty free background music for videos, CDs etc.

- Playlist live background music for meditation, sleep, relaxation, yoga, art, installations, small shops etc.

- Create "reflective music" melodies using "Text-to-Music"; use text in ANY language and/or emoji

- Experiment with sound design, generative music composition, MIDI input interactivity etc.

- Drive other MIDI synths via MIDI Out

- Use the 'Cut-Up' Technique to help break writer's block; discover new ideas for lyrics, songs, poems, haiku, stories etc.

- Play webpage embedded Wotja URLs (Wotja files, Wotja Box files) with Wotja for macOS and its Safari App Extension

- Open Noatikl, Mixtikl & Liptikl files

Note: Capabilities are iOS/macOS version dependent.

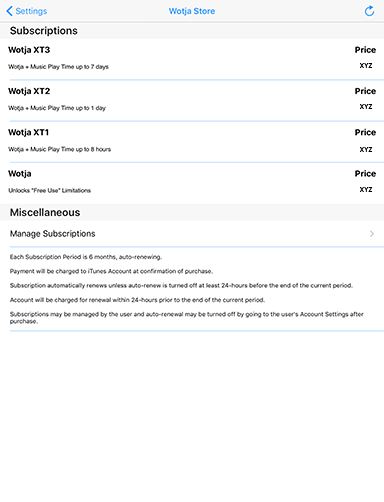

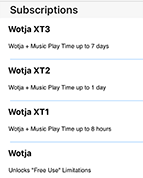

Wotja 4 Versions and In-App Subscriptions

Wotja 4 is available in two versions: a free subscription version ("Wotja") and a paid-for non-subscription "annual" version (e.g. "Wotja 17" / "Wotja 2017").

"Wotja" (subscription version)

- A powerful FREE app with an In-App Store. It runs in "Free Mode" until you purchase an In-App Subscription, i.e. "Wotja Unlocked" or one of the "Wotja XT" tiers. If the In-App Subscription lapses, Wotja reverts to the "Free Mode" of operation.

- Extending the Music Play Time: Wotja has a maximum continuous "Music Play Time" of 1 hour. When this is reached, a mix that is still playing will stop, and a simple manual restart starts it playing again. For specialist use cases that need a longer Music Play Time to generate continuous live background music (e.g. for art installations or a small shop), the subscription version lets you extend it by purchasing one of several specialist "Wotja XT" In-App Subscription tiers. See: FAQ on Wotja XT Extensions.

- For the benefits of In-App Subscriptions read this FAQ entry.

- We also make available a 3 Day Free Trial.
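The Music Play Time rule above (a mix stops when its continuous play limit is reached and a manual restart starts it again) can be modelled as a simple timer. This is a hypothetical sketch, not Wotja's code: the `PlaySession` class and the mode names are illustrative, the 1 minute figure comes from the "Free Mode" Limitations list, and we assume the 1 hour maximum is the one that applies with "Wotja Unlocked". The "Wotja XT" durations are not stated in this guide, so they are left out.

```python
from dataclasses import dataclass

# Hypothetical limits, in seconds. "free" is the Free Mode limit; "unlocked"
# assumes the 1 hour maximum applies to "Wotja Unlocked". XT tiers are omitted.
PLAY_TIME_LIMIT_SECONDS = {
    "free": 60,
    "unlocked": 3600,
}


@dataclass
class PlaySession:
    """Illustrative model of one continuous play of a mix."""
    mode: str
    elapsed_seconds: float = 0.0
    playing: bool = True

    def tick(self, seconds: float) -> None:
        """Advance the clock and stop once the continuous play limit is reached."""
        if not self.playing:
            return
        self.elapsed_seconds += seconds
        if self.elapsed_seconds >= PLAY_TIME_LIMIT_SECONDS[self.mode]:
            self.playing = False

    def restart(self) -> None:
        """Assumption: a manual restart begins a fresh continuous play period."""
        self.elapsed_seconds = 0.0
        self.playing = True
```

For example, a `PlaySession("free")` that has ticked 60 seconds reports `playing == False` until `restart()` is called.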

"Wotja 2017"* (paid for non-subscription "annual" version)

- Identified by year (e.g. 2017, or an abbreviation such as 17), the paid-for non-subscription "annual" version of Wotja is updated in tandem with Wotja during that year only. It has the same capabilities as Wotja with an active "Wotja Unlocked" In-App Subscription. The annual version has no In-App Store or In-App Subscriptions.

Important: At the end of the year in question, the annual version will be removed from sale and no further updates will be made available for it. It will likely continue to work fine if you later update the operating system on your device. However, if you find you need further updates (perhaps because of an operating system or 3rd party component update), or if you want the cool new features, content and capabilities as Wotja evolves, simply purchase a later annual version (e.g. Wotja 2018). Alternatively, get the subscription version of Wotja and purchase one of the In-App Subscription tiers: "Wotja Unlocked" or, for extended Music Play Times, one of a range of "Wotja XT" tiers. See the FAQ on subscriptions and their benefits.

* By a quirk of fate, the 2017 paid-for Wotja for iOS, i.e. "Wotja 2017" (also previously referred to as "Wotja Pro 2017"), has the more capable "Wotja XT1" tier enabled rather than the "Wotja Unlocked" tier. That will be addressed in the Wotja 2018 release! :)

"Free Mode" & Limitations

So that Wotja can always be used for FREE, we include a "Free Mode" of operation in the free subscription version. It does have certain "Limitations" (see below), but simply purchase ANY In-App Subscription to unlock them.

If an In-App Subscription lapses, Wotja simply reverts to operating in "Free Mode" - it's as simple as that!

Note: For the subscription version we also provide a one time only fully functional 3 Day Free Trial (see the link for details).

The "Free Mode" Limitations are as follows:

- Mix:

  - Save/Export - Free Mode: Mix Cell 1

  - Music Play Time - Free Mode: 1 minute

  - MIDI Out/In - Free Mode: Channel 1

  - Mixdown Recording (Audio/MIDI) - Free Mode: Disabled

- Cut-Up:

  - Save - Free Mode: Data not saved (e.g. Cut-Up, Saved Cut-Ups, Sources, Rules)

  - Text Export - Free Mode: Limited to 100 characters

  - Cut-Up Text Editing - Free Mode: Disabled

  - Syllables in Rules - Free Mode: Limited to 5 uses per hour
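Two of the Cut-Up limits above are easy to picture in code: a 100 character cap on exported text and a quota of 5 "Syllables in Rules" uses per hour. The sketch below is hypothetical and not how Wotja implements them; in particular, we assume "per hour" means a rolling 60 minute window (a clock-hour reset would be equally plausible), and the names `free_mode_export` and `SyllableQuota` are illustrative.

```python
from collections import deque

TEXT_EXPORT_LIMIT = 100      # Free Mode: Text Export limited to 100 characters
SYLLABLE_USES_PER_HOUR = 5   # Free Mode: Syllables in Rules limited to 5 uses per hour
WINDOW_SECONDS = 3600


def free_mode_export(text: str) -> str:
    """Truncate exported Cut-Up text to the Free Mode limit."""
    return text[:TEXT_EXPORT_LIMIT]


class SyllableQuota:
    """Sliding-window counter: at most max_uses within any rolling window (assumed)."""

    def __init__(self, max_uses: int = SYLLABLE_USES_PER_HOUR,
                 window: float = WINDOW_SECONDS) -> None:
        self.max_uses = max_uses
        self.window = window
        self._uses: deque[float] = deque()  # timestamps of accepted uses

    def try_use(self, now: float) -> bool:
        """Record a use at time `now` (seconds) if the quota allows it."""
        # Forget uses that have fallen out of the rolling window.
        while self._uses and now - self._uses[0] >= self.window:
            self._uses.popleft()
        if len(self._uses) < self.max_uses:
            self._uses.append(now)
            return True
        return False
```

With this model, five uses in quick succession succeed, a sixth is refused, and a new use becomes available once the oldest one is an hour old.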

Getting Started

Wotja is a deep app and that is reflected in this detailed User Guide. The iOS and macOS versions have largely the same features with interfaces that are geared to the underlying operating system. If you know how to use one, then you know how to use the other and vice versa. Because of that, and because Wotja for iOS came first, this User Guide is presently based around the iOS interface.

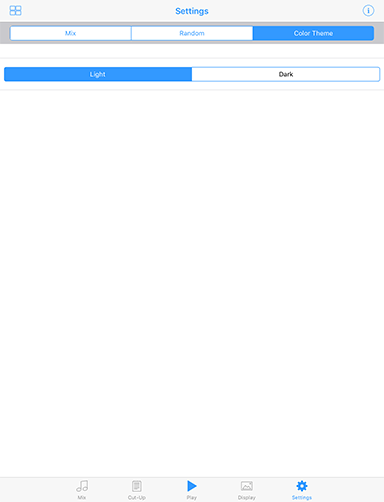

Note: Throughout this User Guide we use screengrabs for the Light UI Theme (this is the only theme presently available in Wotja for macOS).

Check out the Wotja: Quick Start (4 min) video or just start out by creating an auto-randomised Music Mix and explore from there:

Wotja: Quick Start (4m)

Please let us know if you think anything is wrong or missing!

Menu (macOS)

See the Video: Wotja iOS: Quick Start (4m) [the same principles apply on macOS]

The Wotja Menu on macOS is where you can:

- Create a new .wotja file or .wotjalist file

- Open a file you have previously saved

- Open any of the supported filetypes (see table below)

- Access the Album Player to play the Built-In Albums

Tip: Use Finder to carry out normal file related activities e.g. delete/duplicate/rename/sort etc.

Note: You may want to use iCloud rather than local storage (if you do use local storage we strongly recommend saving your files to the Intermorphic Folder). If you use iCloud your files will also appear in the Wotja iCloud Drive folder for all your devices, meaning you can easily sync your Wotja related files between devices that share the same iCloud account (this is also handy for the Wotja Safari App Extension for macOS). See the iCloud FAQ.

WOTJA BACKUPS: Make these by A) using iCloud to store your files, B) backing up your device with Time Machine or equivalent, or C) emailing yourself a backup copy of each wotja. Using iCloud is the easiest way to manage your files.

| UI Item | Description |
|---|---|
| Menu Bar | |
| Wotja Menu [macOS] | Wotja for macOS Menu items |

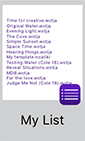

Files (iOS)

See the Video: Wotja iOS: Quick Start (4m)

If you launch Wotja on iOS the Files screen is the first screen you see. It displays the openable files (see below) that are in the Wotja iCloud folder or present in the Local Wotja app folder on the device (see iCloud/Local below).

The Files screen is where you can:

- Create a new .wotja file or .wotjalist file

- Open a file you have previously saved

- Open any of the supported filetypes

- Delete/duplicate/rename/sort files

- Access the Album Player to play the Built-In Albums

Filetypes:

- The files that Wotja can open directly are: .wotja, .wotjalist, .wotjabox, .noatikl, .mixtikl and .liptikl.

- Note: Wotja cannot directly open .zip, .sf2, .midi, .wav or .ogg files but these can be referenced via the relevant editor as follows:

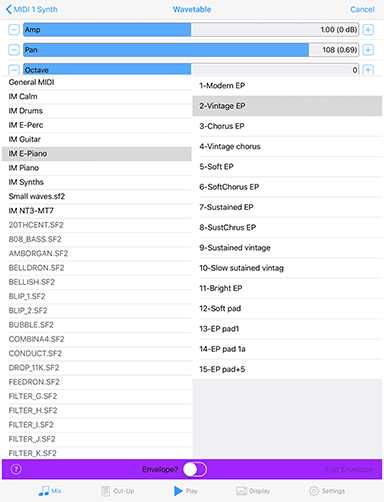

- .sf2: Wavetable Unit

- .wav, .ogg, .midi [in correctly formatted Paks]: Content Cell > Template List.

- See the FAQ: File Management - Where should I put or look for App Files, Zips, SF2, WAVs etc.?.
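The filetype rules above amount to a lookup table: six extensions open directly, and a few others can only be referenced from an editor. The helper below is a hypothetical summary of this guide, not part of Wotja. The guide does not say which editor references `.zip` files, so the sketch points to the File Management FAQ instead, and anything not listed here is reported as such rather than assumed unsupported.

```python
from pathlib import Path
from typing import Optional

# Extensions this guide lists as directly openable by Wotja.
OPENABLE = {".wotja", ".wotjalist", ".wotjabox", ".noatikl", ".mixtikl", ".liptikl"}

# Extensions that cannot be opened directly but can be referenced from an editor.
PAK_TEMPLATES = "Content Cell > Template List (in correctly formatted Paks)"
REFERENCED_VIA: dict[str, Optional[str]] = {
    ".sf2": "Wavetable Unit",
    ".wav": PAK_TEMPLATES,
    ".ogg": PAK_TEMPLATES,
    ".midi": PAK_TEMPLATES,
    ".zip": None,  # no editor named in this guide; see the File Management FAQ
}


def how_to_use(filename: str) -> str:
    """Describe how a file of this type is used, according to this guide."""
    ext = Path(filename).suffix.lower()
    if ext in OPENABLE:
        return "open directly"
    if ext in REFERENCED_VIA:
        where = REFERENCED_VIA[ext]
        return f"reference via {where}" if where else "reference only (see FAQ)"
    return "not listed in this guide"
```

For example, `how_to_use("Ambient.wotja")` gives `"open directly"`, while `how_to_use("piano.sf2")` gives `"reference via Wavetable Unit"`.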

Tip: The thumbnail you see for each file in iOS varies according to the file type and whether your file uses TTM.

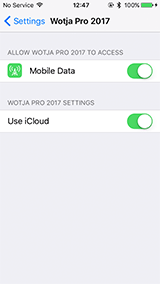

Tip: When you first start Wotja, you're asked whether you want to use iCloud or Local storage. If you change your mind, go to your iDevice's Settings screen, scroll down to find the version of Wotja that you have and open its screen. There you will see a "Use iCloud" toggle. If you turn that off, your files are ONLY saved locally to your device, so you should back them up regularly. See the File Management FAQ.

Note: You may want to use iCloud rather than local storage (i.e. saving your files to your iOS device). If you use iCloud your files will also appear in the Wotja iCloud Drive folder for all your devices, meaning you can easily sync your Wotja related files between devices that share the same iCloud account. See the iCloud FAQ.

WOTJA BACKUPS: Make these by A) using iCloud to store your files; B) backing up / syncing your iOS device (note that a device restore will be needed to recover your wotjas); C) emailing yourself a backup copy of each wotja; or D) if you have a desktop computer, using iTunes Apps File Sharing to copy your wotjas off your device and save them to your computer. Using iCloud / iCloud Drive is the easiest way to manage your files, but if you do not want to use it and/or want to back up your files manually, see the instructions on the Intermorphic website for File Management - Where should I put or look for App Files, Zips, SF2, WAVs etc.?

| UI Item | Description |
|---|---|
| Album [iOS] | The Album Player is only for playing the Built-In albums. |
| Action [iOS] | Popup Menu: |
| Settings | Go to the Settings screen [macOS: Wotja > Window > Wotja Settings]. |
| Edit | First tap the jiggling file(s) to select the ones that you wish to perform an action on and then: |
| Create New | Select "Create New" to see the following popup menu [macOS: Wotja > File > New]: |
| Thumbnail (Saved File) | Saved File: Tap one of the Saved File document thumbnails to open a previously created file. A file's thumbnail will briefly jiggle when you close the file and return to the Files screen, making it easy to see which file you have just played or edited. How the thumbnail displays depends on a number of factors. File Name: Tap the File Name for a pop-up dialog to rename the file. |
| Thumbnail (Wotja List) | Wotja List: Wotja List files (.wotjalist) are played in the List Player and are indicated by a small purple list icon overlaying the bottom right of the thumbnail image. If the Play list includes an Album thumbnail you will see that here; otherwise you will see a list of the included files. File List Name: Tap the File Name for a pop-up dialog to rename the Wotja List file. |
| Thumbnail (Wotja Box) | Wotja Box: Wotja Box files (.wotjabox) are played in and exported from the List Player and are indicated by a small golden box icon overlaying the bottom right of the thumbnail image. If the Wotja Box includes an Album thumbnail you will see that here; otherwise you will see a list of the included files. File List Name: Tap the File Name for a pop-up dialog to rename the Wotja Box file. |
| Template | Template: A template file is shown with a small IME overlay in the bottom right corner. These are presently .noatikl files, but we may later change the extension. You can save the generative content in a cell as a template by tapping the bottom toolbar Toolbox icon and selecting "Save as File". Once saved, you can find these files in the Template list under "Saved Files". |

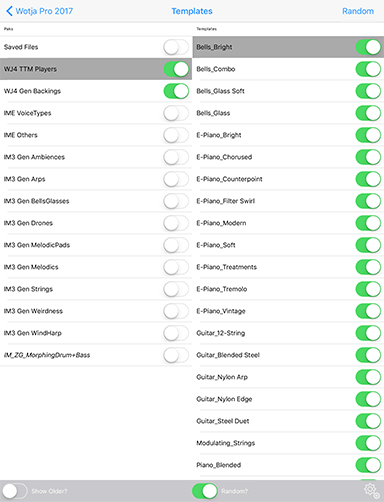

Templates

See the Video: Wotja iOS: Template List (5m)

The Template List is covered at the beginning of this User Guide because Wotja's use of templates is fundamental to how it works.

Templates are self-contained "generative music and sound presets"; they can vary from simple to very complex generators of notes and sounds.

Using randomisation techniques, Wotja lets you mix templates together to very quickly create interesting music mixes. Once a template is added to a mix you can edit/customize any of its settings, but you do not have to - they sound great as they are!

The Templates screen is where you preview and select templates that you want to base a new mix on (when creating a new Manual mix) or where in Mix mode you add a template to a Content Cell.

This screen can be accessed from:

- Files > Create New > Music Mix (manual)

- Settings > Random > Templates for Randomization

- Mix mode > Randomize button (dice) > Randomize Mix - Manual

On the left of the top panel is a button that takes you back to the screen you came from. Depending on how you accessed this screen, the top right menu items will vary (see below).

Tip: If you tap on a template or file in the left hand panel you can preview it without having to open it.

Tip: We include a number of cool Demo Pieces for you to check out. Turn off the Random? toggle to see the "Demo Mixes" pak at the bottom of the Paks list. Some of the Demo Mixes require installation of one of the Free add-on Content Paks.

| UI Item | Description / Menu Items |
|---|---|
| Back (top left) | Back to the screen you came from. |
| Random (top right menu) | When creating a new Manual Mix: |
| Show Older? (left) | Toggle this on to see older templates that were included in Noatikl 2 and Mixtikl 6. |
| Random? (right) | Toggle this on to see all the Pak and content toggles and the top "Random" menu option (only when creating a new Manual Mix). These toggles give you fine control over which Paks and content can be selected when you select the top right Random menu item. Tip: Turn this off to be able to see the "Demo Pieces" Pak at the bottom of the Pak list. |
| Random Settings | Shown only when the Random? toggle is on. Takes you to the Randomization Settings screen. |
| Paks (left list) | The list of Paks you can select a Template from. Saved Files: Always shown at the top for easy access, this is the list of wotjas or generative templates that you have already created and saved. WJ4 Paks: These include templates optimised for Wotja 4. IME VoiceTypes: Simple templates that let you start a cell with just one VoiceType. IME Others: Older sundry templates. IM3 Paks: The Paks that were available for Noatikl 3 and Mixtikl 7. IM2 Paks: (Shown in dark grey, and only if the "Show Older?" toggle is on.) These include generative templates that came with Noatikl 2 and Mixtikl 6. IM1 Paks: (Shown in dark grey, and only if the "Show Older?" toggle is on.) These include generative templates that came with Noatikl 1 and Mixtikl 1-5. 3rd Party Paks: If you have them installed, Wotja Paks (e.g. MDB or the AL Collections) are shown in dark grey italic; other third party Paks are shown in black italic. Demo Files: Have a listen! Tip: To be able to see this Pak, turn off the "Random?" toggle. |
| Templates (right list) | The list of Templates in the selected Pak. Tap to preview a Template; tap again to stop the preview. |

Editing Modes

See: "Free Mode" & Limitations

See the Video: Wotja iOS: Quick Start (4m)

Wotja is a very powerful creativity tool for creating and sharing generative music mixes and cut-up ideas.

The Wotja mix files it can save contain everything Wotja needs to play and display what you have created (excepting any locally referenced items, e.g. .zip, .sf2, .wav, .ogg, .midi files; these are not saved to mix files).

This means a Wotja mix file contains generative music parameters, sound network configurations and settings, text and emoji, display settings and even a background image - what is saved to the file depends on whether you are using Free Mode or not and what you have added to it.

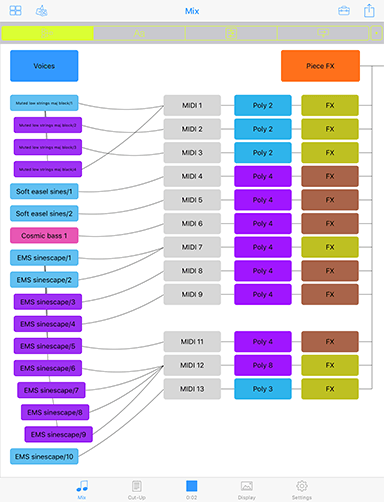

To edit the different kinds of parameters/settings stored in the file, Wotja has three distinct editing modes: "Mix", "Cut-Up" and "Display". The parameters/settings used in each of these modes are saved to the Wotja file.

Each of these three modes is accessed via a "tab" on the bottom tab bar in Wotja, which is slightly different for the iOS and macOS versions, as shown below.

Editing Modes - iOS

Mix | Cut-Up | Play/Stop | Display | Settings

Editing Modes - macOS


- Mix: Where you "design" your wotja: accessing parameters for the Intermorphic Music Engine (IME) and Intermorphic Synth Engine (ISE) to define how the music is generated and what sounds are used, adding TTM (text), mixing voices and templates, and setting cell rules for arrangement.

  Note: The Mix mode features 5 different right hand panel views of data, selected via the top toolbar segment control (two of the panels share one segment). Each of these provides access to different controls that let you set up much of how your wotja works.

- Cut-Up: Where you have access to a powerful cut-up text editor to stimulate ideas for creative writing.

- Display: Where you decide how you want your wotja to look, this being important should you wish to share it.

Common Controls

In addition, and so they are accessible throughout the app, we include two other controls (access is OS dependent):

- Play/Stop button: [iOS: bottom toolbar; macOS: top toolbar] Tap to stop or start the generative mix (if any) in your wotja. On iOS an indicator beneath the button shows how long your mix has been playing; on macOS this is to the right of the button.

- Settings: [iOS: bottom toolbar; macOS: Wotja > Window > Wotja Settings] Takes you to the Settings screen where you can change Mix and Randomisation related settings (including Template selection).

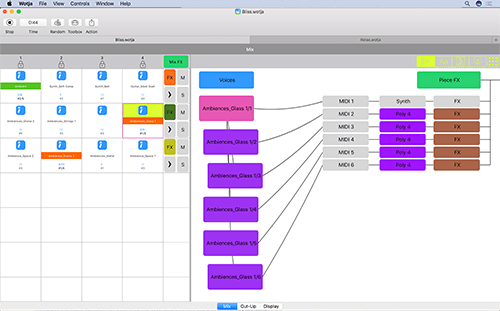

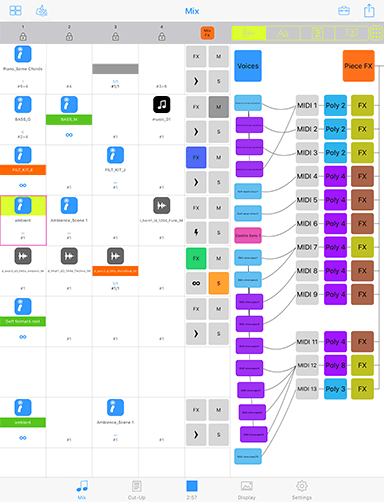

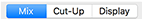

Mix Mode: UI Overview

See: "Free Mode" & Limitations

See the Video: Wotja iOS: Quick Start (4m)

This section gives you a quick overview of the interface related to creation of Wotja mixes in the Mix Mode, accessed via the bottom Mix Tab.

The Mix Mode has the most involved UI in Wotja as it concerns the mixing and editing of generative music. Both iOS and macOS versions have largely the same authoring feature set with native interfaces and the same way of working. If you know how to use one, then you know how to use the other and vice versa.

Note for macOS: A draggable middle splitter bar lets you resize the left and right hand panels to suit your preference. In macOS 10.11, the right hand Network panel (and some others) does not refresh dynamically while it is being resized.

Right Hand Panel Display

Use the top toolbar 4-way segment control to show either the Network, TTM, Mixer or Rules data in the Wotja mix file.

- Network Panel

- TTM Panel

- Mixer Panels

- Rules Panel

Left Hand Cells/Grid Display

Use the top toolbar button to right of the Panel Display segment control for a pop-up menu to select between Multi Column, Single Column or Single Cell display modes.

See the Central Area of the table below for detailed information on the Wotja Content Cells.
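As the Mix Display Mode button entry in the table below explains, a mix always holds a grid of 12 tracks of 4 cells, shown in Multi Column, Single Column or Single Cell mode. The sketch below is a hypothetical model of that grid, not Wotja's data format. It assumes Single Column view shows the column of the selected cell (the Column Lock entry says the column number shown is that of the selected Cell) and that Single Cell view shows only the selected cell; the names `MixGrid` and `visible` are illustrative.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional

TRACKS = 12          # a mix always has 12 tracks...
CELLS_PER_TRACK = 4  # ...of 4 cells each, i.e. 4 columns

Cell = tuple[int, int]  # (track, column), both 0-based in this sketch


class DisplayMode(Enum):
    MULTI_COLUMN = "Multi Column"
    SINGLE_COLUMN = "Single Column"
    SINGLE_CELL = "Single Cell"


def _empty_grid() -> list[list[Optional[str]]]:
    return [[None] * CELLS_PER_TRACK for _ in range(TRACKS)]


@dataclass
class MixGrid:
    # cells[track][column] holds the name of the cell's content, or None if empty.
    cells: list[list[Optional[str]]] = field(default_factory=_empty_grid)

    def visible(self, mode: DisplayMode, selected: Cell) -> list[Cell]:
        """Cells shown in the left hand area for a given display mode (assumed)."""
        _track, column = selected
        if mode is DisplayMode.MULTI_COLUMN:
            return [(t, c) for t in range(TRACKS) for c in range(CELLS_PER_TRACK)]
        if mode is DisplayMode.SINGLE_COLUMN:
            # Single column view follows the column of the selected cell.
            return [(t, column) for t in range(TRACKS)]
        return [selected]
```

Multi Column view therefore covers all 48 cells, while Single Column view with cell (5, 2) selected shows the 12 cells of column 2.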

| UI Item | Description |
|---|---|
| Menu Bar | |
| Save/Back to Files [iOS] | Save / Back to Files. See: "Free Mode" & Limitations. Displays the Save Changes popup (see below) before exiting to the Files Screen. |
| Randomize Mix | |
| Toolbox | See: "Free Mode" & Limitations. Tap the Toolbox button to pop up a menu where you can select from a number of useful actions. The button takes on a fuchsia colour when you have selected a sticky action. Tap the button again to turn off sticky behaviour. |
| Action | See: "Free Mode" & Limitations |
| Top Toolbar | |
| Column Lock | Tap this button to lock a column and force the play of all cells in that column; tap again to unlock it. Locking a column only has an effect on cells in a Track whose Track Rule is "Sequence" and that are not looped (i.e. no orange band). In single column view, the column number shown at the top is that of the selected Cell. |

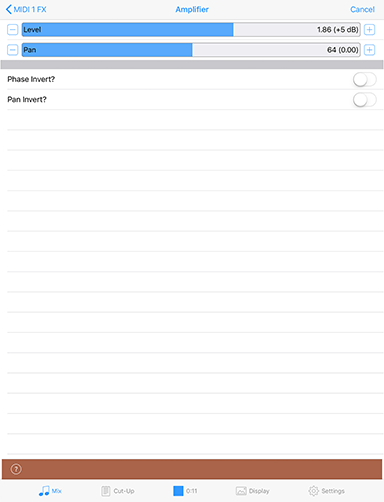

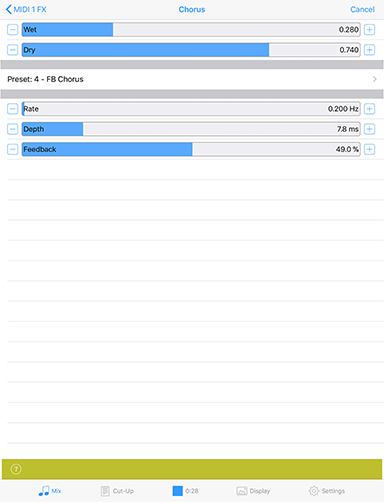

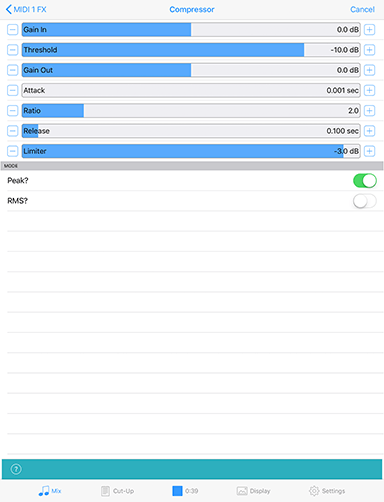

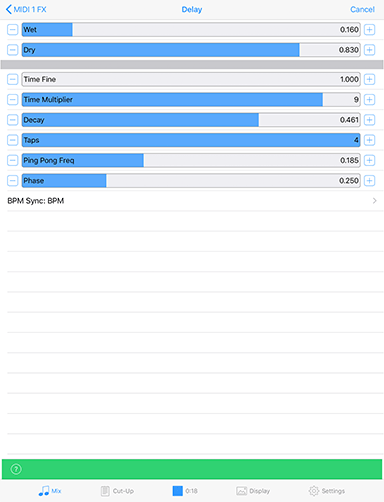

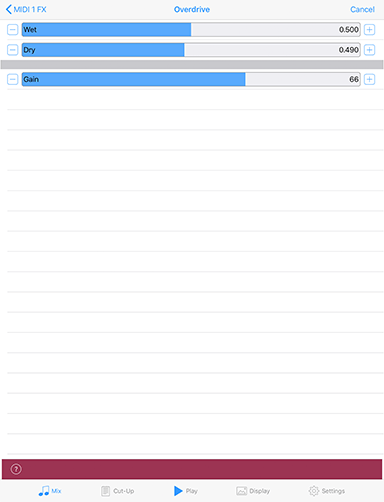

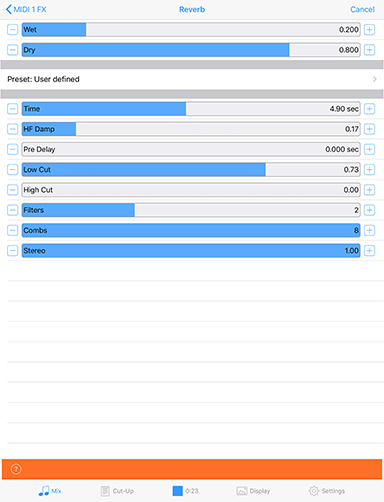

| UI Item | Description |
|---|---|
| Mix FX | This button indicates whether any Mix level FX is applied; when none is applied it is a dull blue colour. Tap the button to go to the ISE FX Network Editor where you can create or edit an FX network. |
| TTM | TTM Panel: For a selected Cell that contains generative content, the right hand panel shows a TTM text field for every voice in the cell. Only Fixed Pattern voices (pink) can play TTM, and the Enable toggle will change a voice to that type when turned on. |

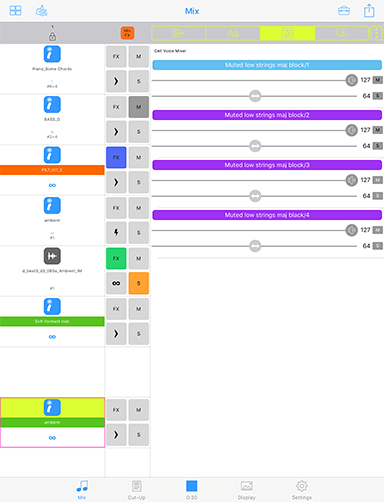

| UI Item | Description |
|---|---|
| Track Mixer (Default) / Voice Mixer | Mixer Panel: Once in this panel, tap the button again for a pop-up menu where you can choose between the Voice Mixer and Track Mixer panels. |
| Cell Rules | Cell Rules Panel: The right hand panel shows the parameter values for the selected Cell that determine things such as how long it will play for. |
| Network | Network Panel: For a selected Cell that contains generative content, the right hand panel shows the cell's generator network. Tap the buttons in the network for quick access to the relevant Voice parameters for the Intermorphic Music Engine (IME), right through to the Intermorphic Synth Engine (ISE) for any sound design you wish to do. |
| Mix Display Mode button | A mix always includes a "grid" of 12 tracks of 4 cells. You can populate or leave empty any cells you wish. This button, shown to the right of the panel selectors, has a pop-up menu allowing you to select one of three display modes for the grid: Multi Column, Single Column or Single Cell. |
| Central Area - Content Cells | |
| Content Cell | Content Cells are at the heart of Wotja. You can use the bottom toolbar right side "grid" icon to toggle the left hand Content Cell display to show either 1 column of Cells or 4 columns of Cells. There are 3 areas/parts of a cell, see below. |
| Selected / Generative / Audio Loop / MIDI File | Selected Cell: When a Content Cell is selected, the border of the cell takes on a fuchsia colour and the top or bottom of the cell takes on a lime green background colour that matches the right side panel selected for display. The top of a Content Cell also displays an icon depending on the kind of content in it: Generative, Audio Loop or MIDI File. |
| Cell Status: Playing / Looping / Play Empty | The middle of a Content Cell displays the Cell Status band, and within that is the name of the file in the Cell. The colour of this band indicates the following: |
| Cell Values: Playing / Stopped / Infinite / Missing Content | The bottom of a Content Cell shows the cell "Arrange" values, presented differently depending on whether the mix is playing or stopped. The topmost values, shown in blue, are the Cell's Generative Bar values; the values below those, in black or dark grey, are the Cell's Cell Repeat values. |
| Central Area - Track Controls | |
| Track FX | Default - None: Tap this button to go into the ISE Network Editor where you can add Track FX. |
| Track Rules: Sequence / One Shot / Loop | Default - Sequence. Sequence: When the content in the Track's current cell has finished playing, the next Cell in the track will play (unless it has been forced to loop). One Shot: No Track Cell will play UNTIL it has been triggered (it will display the green "play marker" in the middle of the cell). Once triggered, any content will play through ONCE (like a sting) and then the cell will stop playing. Loop: No Track Cell will play UNLESS it is forced to loop, either by tap/hold on the cell or by setting the Loop toggle in this panel; turn off looping the same way. Tip: When using the Toolbox "Randomize" menu functions, only the "Randomize Cell (sticky)" item will randomize the Cell. Advanced Tip: Cells in Loop tracks containing Audio/MIDI content will play ONCE when the Column lock is first applied to a Column (if a cell is not already looping); cells containing generative content will be set to play for the length of the mix (∞). |
| Track Solo Button | Tap to solo the related Track. Gold tint means soloed, grey means not soloed. This button is in the Track Control grouping to the left of the sliders. |
| Track Mute Button | Tap to mute the related Track. Dark grey means muted, grey means not muted. This button is in the Track Control grouping to the left of the sliders. |
| Central Area - Right Side Panel | |
| Generator Network | Voices Button: This is the top left Voices button, a shortcut to the Voice Editor screen. You can still tap a Voice and select Edit from the pop-up menu to go to the Voice parameter screen for that voice. See also the Intermorphic Music Engine (IME) section of the guide for details on what the parameters do. What shows in this area depends on which panel you are in; see in order: |

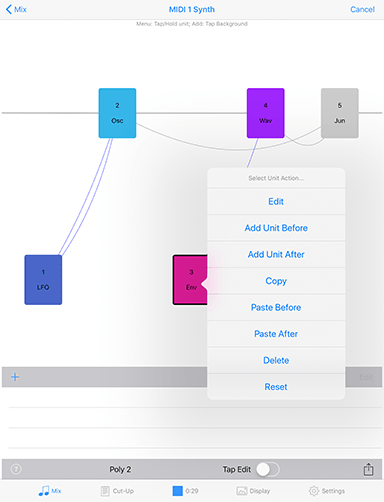

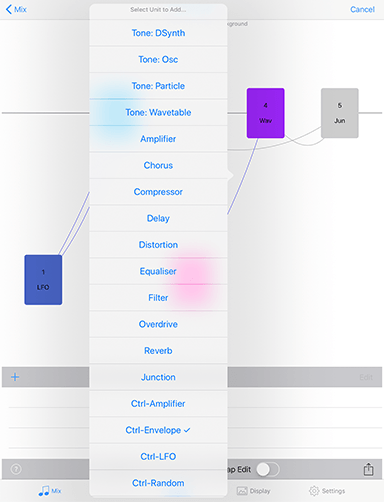

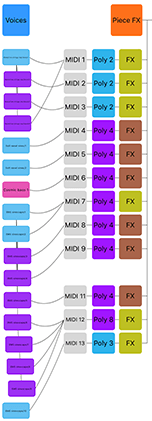

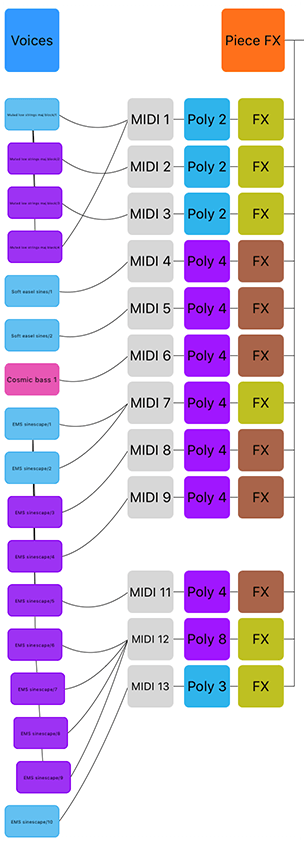

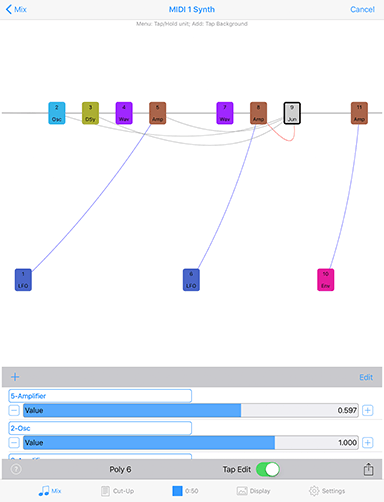

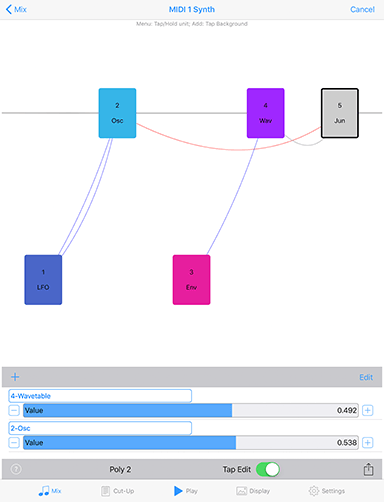

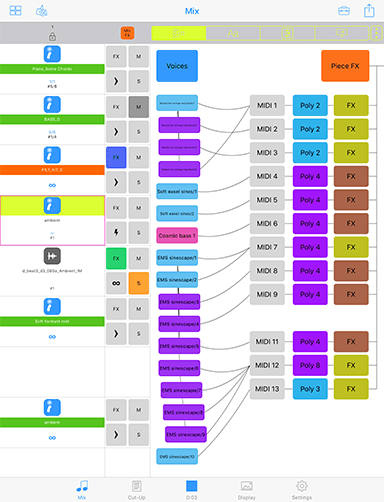
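The Track Rules and Column Lock entries in the table above describe what a track does when a cell's content finishes. The sketch below is a simplified, hypothetical model of those rules, not Wotja's engine. Assumptions: cells are indexed 0-3; a looped cell keeps replaying whatever the track rule; the guide does not say what a Sequence track does after its last cell, so this sketch just stops; and the Advanced Tip about Loop tracks under Column Lock is not modelled.

```python
from enum import Enum
from typing import Optional

CELLS_PER_TRACK = 4


class TrackRule(Enum):
    SEQUENCE = "Sequence"  # the default
    ONE_SHOT = "One Shot"
    LOOP = "Loop"


def next_cell(rule: TrackRule, current: int, looped: bool) -> Optional[int]:
    """Index of the cell to play after `current` finishes, or None if the track stops."""
    if looped:
        # Assumed: a looped cell (tap/hold or Loop toggle) keeps replaying.
        return current
    if rule is TrackRule.SEQUENCE:
        nxt = current + 1
        # Assumption: after the last cell the track simply stops in this sketch.
        return nxt if nxt < CELLS_PER_TRACK else None
    # One Shot: content plays through once, then the cell stops.
    # Loop: nothing plays unless the cell is forced to loop.
    return None


def column_lock_applies(rule: TrackRule, looped: bool) -> bool:
    """Column Lock only forces play of unlooped cells in Sequence tracks."""
    return rule is TrackRule.SEQUENCE and not looped
```

Keeping the rule check in one function makes the difference between the three Track Rules explicit: only Sequence ever advances to another cell on its own.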

Mix Mode: Panel - Network

See the Video: Wotja iOS: Quick Start (4m)

The right side "Network" panel is where you can marry IME voices (note generators) to MIDI channels, ISE synth sounds and FX. The buttons in the network and associated ISE network all provide quick links to the underlying parameters and editors.

Tap the top Voices button for quick access to the IME parameter editors. Go to the Cell Rules panel to set Cell and Mix level settings such as Cell Rules and Mix Tempo.

Tap/hold a voice and drag it up/down to change the voice order. Dragging a Voice button onto a MIDI channel box tells the system that the Voice will play through that MIDI channel. A newly added voice is not assigned to a specific MIDI channel; if you haven't yet hooked up a Voice to a MIDI channel, the IME will pick a free MIDI channel for it when it starts playing.

For full information on the Intermorphic Music Engine (IME) parameters refer to the IME section of this guide.

For full information on the Intermorphic Synth Engine (ISE) parameters refer to the ISE section of this guide.

| UI Item | Description |

Voices button Voices button |

Tap to go to the Voice Editor screen. See also the Intermorphic Music Engine (IME) section of the guide for details on what the parameters do. |

Piece FX Piece FX |

Tap set the piece level FX in the ISE FX editor. |

Voice button Voice button |

Tap on a voice to get a Voice Action pop-up menu below. To Paste a voice (once copied) you must tap an existing voice and then select Paste.

|

MIDI channel MIDI channel |

The Voice "IME Patch" parameter (see Voices screen) defines which MIDI Patch command to send for each MIDI channel. The MIDI channel box is an indicator and not a button. However, you can tap/hold a voice button and drag it to a MIDI channel box. In general, you should only hook-up multiple Voices to the same MIDI channel if they all share the same Patch parameter. MIDI Channel 10 is reserved for the Drum instrument. Every voice with a Drum Patch, should generally be attached to MIDI Channel 10. |

Synth button Synth button |

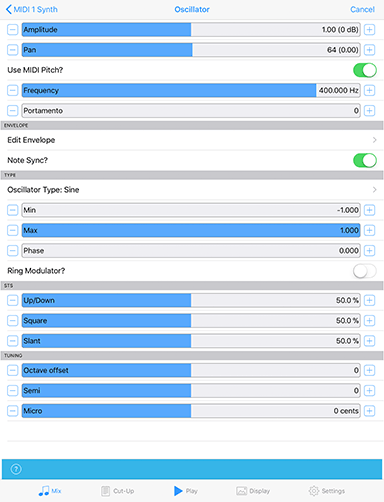

If the "ISE for Sounds & FX" toggle is checked in the Settings screen, then MIDI events are routed for each channel through a ISE Synth configuration defined by the associated Synth button, which by default will use ISE's built-in MIDI Wavetable synth (using the Patch defined by the Voice's Patch parameter). If you want to use a custom sound, click the Synth button to invoke the ISE Synth editor. |

Track FX button Track FX button |

The output from every Synth box can be passed through a custom FX Network. If you want to use an FX, click the FX button to invoke the ISE FX editor. |
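The channel rules in this section (drag a voice onto a channel to assign it, otherwise the IME picks a free channel; channel 10 is reserved for drums) can be sketched as below. This is a hypothetical model, not Wotja's code: `assign_channel` and its "first free channel" policy are assumptions. `program_change` builds a standard MIDI Program Change message (status byte `0xC0` plus the 0-based channel, then a program number 0-127), which is the usual MIDI patch command; we assume, without confirmation from this guide, that this is what the "IME Patch" parameter results in.

```python
from typing import Optional

DRUM_CHANNEL = 10  # MIDI channel 10 is reserved for drums (1-based numbering)


def assign_channel(is_drum: bool, used: set[int],
                   requested: Optional[int] = None) -> int:
    """Pick a 1-based MIDI channel for a voice (hypothetical policy)."""
    if requested is not None:
        return requested  # the user dragged the voice onto a MIDI channel box
    if is_drum:
        return DRUM_CHANNEL
    for ch in range(1, 17):
        # Skip the drum channel and any channel already in use.
        if ch != DRUM_CHANNEL and ch not in used:
            return ch
    raise RuntimeError("no free MIDI channel")


def program_change(channel: int, program: int) -> bytes:
    """Standard MIDI Program Change: status 0xC0 | (channel - 1), then program 0-127."""
    if not (1 <= channel <= 16 and 0 <= program <= 127):
        raise ValueError("channel must be 1-16 and program 0-127")
    return bytes([0xC0 | (channel - 1), program])
```

For example, a non-drum voice added when channels 1-9 are taken lands on channel 11 in this model, because channel 10 is skipped.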

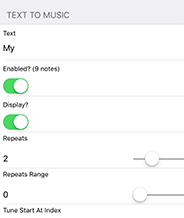

Mix Mode: Panel - TTM

See the Video: Wotja iOS: Quick Start (4m)

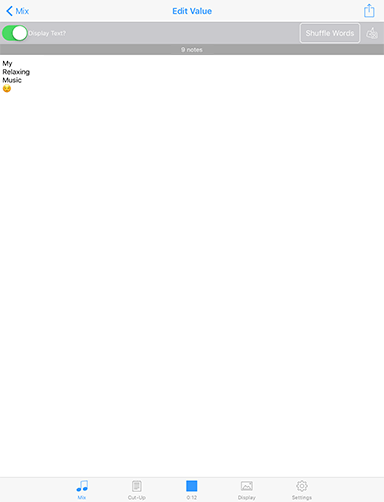

The "Text to Music" (TTM) panel is where you see which TTM players are active and it provides a quick route to adding text into them.

Tap a coloured voice button to go to that Voice's parameters in the Voices tab.

Tap the Text area to go into the Text Editor screen (see table below) where you can add your text and emoji.

You can use any text or symbols (including emoji), and it can be in any language. In general it takes 2 characters to generate a note, but as a special case, if only 1-3 characters or emoji are entered, Wotja will still generate a 3-4 note melody.
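The note-count rule of thumb above can be turned into a rough estimator. This is a hypothetical sketch, not the actual Text-to-Music algorithm: it counts Unicode code points (a composite emoji may count as several), rounds down for odd lengths, and assumes empty text produces no notes. The guide does not say how whitespace is treated, so it is simply counted.

```python
def estimated_note_count(text: str) -> tuple[int, int]:
    """Rough (min, max) number of notes a TTM text might produce (assumed rules)."""
    n = len(text)  # counts code points; a composite emoji may count as several
    if n == 0:
        return (0, 0)  # assumption: empty text generates nothing
    if n <= 3:
        return (3, 4)  # special case: 1-3 characters still give a 3-4 note melody
    notes = n // 2     # in general about 2 characters per note (rounded down here)
    return (notes, notes)
```

So a single emoji such as "🎵" is estimated at 3-4 notes, while an 8 character phrase is estimated at about 4.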

| UI Item | Description |
|---|---|
| TTM Panel | |
| Voice button | Tap to go to the parameters for this voice in the Voices tab. In that screen, tap the LHS "Text Pattern" item to scroll to all the TTM related parameters. |
| Text | This is your TTM text. Tap this to go to the Text Editor screen below. |
| Enable toggle | Turn this on to enable the TTM voice, meaning your text will be used to generate a pattern. If the voice type is not already Fixed Pattern, turning this toggle on will change it to one. The pattern generated by your text will then override any pattern that may already be in the Fixed Pattern voice. |

Text Editor Screen

Accessed via the Mix Mode > TTM Panel > Voice TTM button or via the Mix Mode > Network Panel > Voices Button > Text to Music > Text parameter, this is the screen where you enter your TTM text and emoji.

Tip: Using the Randomise button in this screen is a quick and easy way to create some cut-up text!

Mix Mode: Panel - Voice Mixer

See the Video: Wotja iOS: Quick Start (4m)

Tip: To swap between Voice Mixer and Track Mixer panels, first select this segment in the top toolbar. Tap the segment again for a pop-up menu which allows you to change between them.

The right side "Voice Mixer" panel is where you can easily change the volume and pan balance of the Voices (generators) used within the cell.

Tip: To mix tracks against each other use the Track Mixer panel.

Tap on a slider to change the volume or pan.

Tap the Mute or Solo button to mute or solo the Voice. These buttons do not at present change colour like those in the Track Mix panel.

| UI Item | Description |

Voice Mix Panel |

|

Voice button Voice button |

Tap to take you to the Voice in the Voices tab so that you can edit its composition parameters. |

Volume Slider Volume Slider |

Tap to change the volume for the related Voice. Tip: This slider changes the volume value for the MIDI channel that this Voice is on. You may have multiple Voices all on one MIDI channel. In that instance, try changing the Voice's Velocity parameters instead. |

Pan Slider Pan Slider |

Tap to change the pan position for the related Voice. Tip: This slider changes the pan value for the MIDI channel that this Voice is on. You may have multiple Voices all on one MIDI channel. |

Voice Mute/Solo Buttons |

Tap to mute or solo the related Voice. Tip: The Voice button will turn light grey when it is muted. These buttons do not at present change colour like those in the Track Mix panel. |
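The Volume and Pan tips above mean that these sliders act on a MIDI channel rather than on a single Voice. As a rough sketch, assuming the sliders map onto the standard MIDI Control Change messages for Channel Volume (CC 7) and Pan (CC 10), which this guide does not confirm, the following Python shows why two Voices sharing a channel move together. The `voices` mapping and the function names are hypothetical.

```python
# Hypothetical sketch: Voices mapped to MIDI channels (0-15).
CC_CHANNEL_VOLUME = 7  # standard MIDI controller number for Channel Volume
CC_PAN = 10            # standard MIDI controller number for Pan


def control_change(channel: int, controller: int, value: int) -> bytes:
    """Build a 3-byte MIDI Control Change message (status 0xB0 + channel)."""
    if not 0 <= channel <= 15:
        raise ValueError("MIDI channel must be 0-15")
    if not 0 <= value <= 127:
        raise ValueError("controller value must be 0-127")
    return bytes([0xB0 | channel, controller, value])


def set_voice_volume(voices: dict[str, int], voice: str, value: int) -> tuple[bytes, list[str]]:
    """Return the volume message for the Voice's channel and every Voice it affects."""
    channel = voices[voice]
    affected = [name for name, ch in voices.items() if ch == channel]
    return control_change(channel, CC_CHANNEL_VOLUME, value), affected


voices = {"Bass": 0, "Pad": 0, "Lead": 1}
msg, affected = set_voice_volume(voices, "Bass", 100)
print(msg.hex(), affected)  # b00764 ['Bass', 'Pad']
```

Because "Bass" and "Pad" share channel 0, one message changes both; this is why the Volume Slider tip suggests using a Voice's Velocity parameters when several Voices share a channel.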

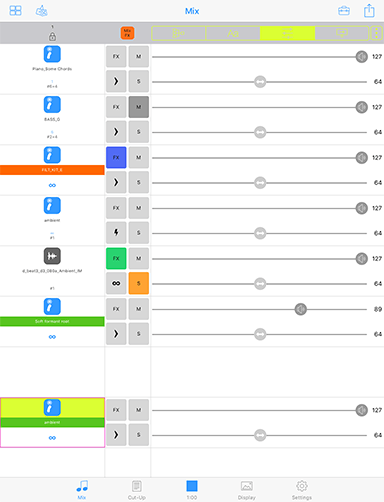

Mix Mode: Panel - Track Mixer

See the Video: Wotja iOS: Quick Start (4m)

Tip: To swap between Track Mixer and Voice Mixer panels, first select this segment in the top toolbar. Tap the segment again for a pop-up menu which allows you to change between them.

The right side "Track Mix" panel is where you can easily change the volume and pan balance of each of the tracks used in the mix.

Tip: To mix the internals of a cell use the Voice Mix panel.

Tap on a slider to change the volume or pan.

Tap the Mute or Solo button to mute or solo the Track. These buttons change colour to indicate their status.

| UI Item | Description |

Track Mix Panel |

|

Volume Slider |

Tap to change the volume for the related Track. |

Pan Slider |

Tap to change the pan position for the related Track. |

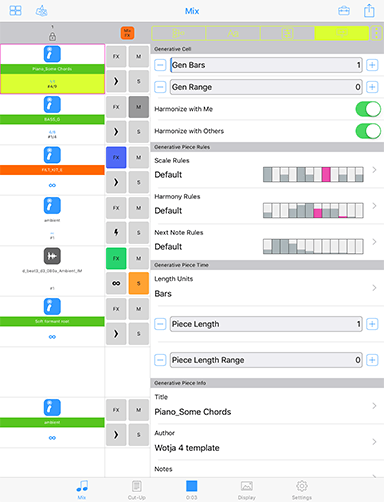

Mix Mode: Panel - Cell & Mix Rules

See the Video: Wotja iOS: Quick Start (4m)

The right side "Rules" panel is where, amongst other things, you can easily set the parameters that define how a Cell will play, as well as the rules used for the Piece in that Cell AND some general values that apply to the whole Mix (such as Tempo, Root, Ramp Up/Down as well as Playlist Duration settings, all shown at the bottom of the panel).

Tip: The values of some of these cell settings are shown overlaid on the relevant Cell.

Mix section: The Mix Tempo, Mix Root and Ramp Up/Down settings apply both when the mix plays on its own and when it plays in a Playlist, whereas the Playlist Duration, Playlist Duration Range and Playlist Ramp Down settings apply only when the mix plays in a Playlist.

Important: We do not support Mix level rules that override those in all cells, but it is something we may consider later.

Tip: When exporting a piece for use as a template, it is best to use a maximum piece length (32,000 seconds).

Tip: If you are going to be making your mix or piece available for others to use, you may well wish to include your own copyright statement for each piece in the mix. Each voice within the piece can also have its own copyright statement.

For full information on the Intermorphic Music Engine (IME) parameters refer to the IME section of this guide.

| UI Item | Description |

Generative Cell |

|

| Gen Bars | Default: ∞ - The minimum number of bars for which a cell will play generative content. Special Value: If this value is set far left to ∞ then once play in a Track reaches such a cell the generative content in that cell will continue to play either for as long as the mix plays or until you manually select another cell in that Track to play. This value is the first of a pair of numbers shown in blue in the top line of text at the bottom of the cell. |

| Gen Bars Range | Default: 0 - A value that is added to the Gen Bars value to define the maximum number of bars for which a cell will play generative content.

This value is the second of a pair of numbers shown in blue in the top line of text at the bottom of the cell. If the first value (above) is ∞ then this value is not shown. |

| Harmonize with Me | Default - ON: This determines if Wotja needs to take the notes played by this Cell into account when composing/playing other Cells. |

| Harmonize with Others | Default - ON: This determines if Wotja needs to take the notes played by other Cells into account when composing/playing notes for this Cell. |

Generative Piece Rules |

|

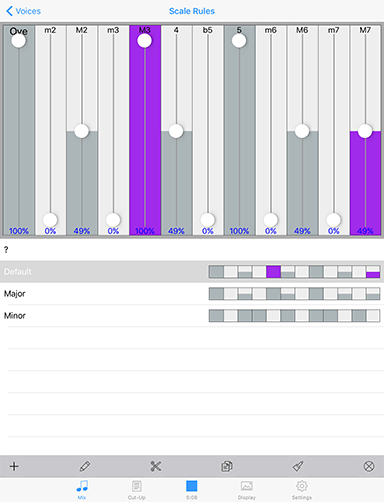

| Scale Rules | Default - Default Rule: When the cell is playing any active rule element will flash; the colour is that of the Voicetype of the playing voice. To edit or change the Piece Level Scale Rule (which can be overridden at Voice level), tap on it to go to the Rule Editor screen. For information on Scale Rules refer to IME Rule Object. For information on Piece Level rules refer to IME Piece Rules. |

| Harmony Rules | Default - Default Rule: When the cell is playing any active rule element will flash; the colour is that of the Voicetype of the playing voice. To edit or change the Piece Level Harmony Rule (which can be overridden at Voice level), tap on it to go to the Rule Editor screen. For information on Harmony Rules refer to IME Rule Object. For information on Piece Level rules refer to IME Piece Rules. |

| Next Note Rules | Default - Default Rule: When the cell is playing any active rule element will flash; the colour is that of the Voicetype of the playing voice. To edit or change the Piece Level Next Note Rule (which can be overridden at Voice level), tap on it to go to the Rule Editor screen. For information on Next Note Rules refer to IME Rule Object. For information on Piece Level rules refer to IME Piece Rules. |

Generative Piece Info |

|

| Title | Displays the Title of the Piece/Template, if it is defined. For information on Piece Title refer to IME File. |

| Author | Displays the Author of the Piece/Template, if it is defined. For information on Piece Author refer to IME File. |

| Notes | Displays any Notes in the Notes field of the Piece/Template, if defined. For information on Piece Notes refer to IME File. |

Cell Rules |

|

| Cell Repeats | Default - 1: The minimum base value for the number of times a cell will repeat after it has played through once; the range value (below) is added to it. For a generative template each bar counts as one repeat; for audio or MIDI content one repeat is the length of the content, rounded up to the end of a bar. Note: If the cell contains generative content and the Gen Bars value is set to ∞, then this setting has no effect, as the cell never repeats! This value is the first of a pair of numbers shown in black in the bottom line of text at the bottom of the cell. |

| Cell Repeats Range | Default - 0: Sets the range that applies to the above base value. This value is the second of a pair of numbers shown in black in the bottom line of text at the bottom of the cell. |

| Loop (Toggle) | Default - Off: Turn this on to force the Cell to loop continuously. Only one Cell per Track can be looped: turning on Looping for another Cell will turn off Looping for this Cell. |

| Cell Root Offset: Mix Root + Semitones | Default - Template Root: Choose the Root you want to apply to the Cell. Its value is a Semitone offset from the Mix Root. |

Track Rule |

|

| Sequence/One Shot/Loop | Default - Sequence: Sequence: When the content in the Track's current Cell has finished playing, the next Cell in the Track will play (unless the current Cell has been forced to loop). One Shot: No Track Cell will play UNTIL it has been triggered (it will display the green "play marker" in the middle of the cell). Once triggered, any content will play through ONCE (like a sting) and then the cell will stop playing. Loop: No Track Cell will play UNLESS it is forced to loop, either by tap/hold on the cell or by setting the Loop toggle. Of the Toolbox menu "Randomize" functions, only "Randomize Cell (sticky)" will randomize the Cell. Advanced Tip: Cells in Loop tracks containing Audio/MIDI content will play ONCE when the Column lock is first applied to a Column (if a cell is not already looping); cells containing generative content will be set to play for the length of the mix (∞). |

File Info |

|

| Name | File name: This displays the name of the audio/MIDI file or original template used in the Cell. |

| Path | Pak Name: This displays the name of the zip file that includes the template. |

| Duration | Content length (secs): This displays the play length of the content in the cell. |

Mix |

|

| Tempo: | Default - Template Tempo: Choose the Tempo you want to apply to the whole Mix. |

| Ramp Up: Seconds | 0-20: This applies when the mix starts to play (also in a Playlist). |

| Mix Root: | Default - A random root for an automatically created mix, or the template root for a manually created mix: Choose the Root you want to apply to the whole Mix. |

| Playlist Duration: Seconds | 10-7200: This is how long the mix will play for in a Playlist (overridden by Music Play Time). Double tap for a pop-up menu where you can edit the value via the on-screen keyboard. |

| Playlist Duration Range: Seconds | 0-7200: A range value added to the Playlist Duration value (overridden by Music Play Time). Double tap for a pop-up menu where you can edit the value via the on-screen keyboard. |

| Ramp Down: Seconds | 0-20: This applies when the mix stops playing in a Playlist. |
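Several settings above (Gen Bars and Gen Bars Range, Cell Repeats and Cell Repeats Range, Playlist Duration and Playlist Duration Range) share one pattern: a base value plus a range that is added to it to give the maximum. The Python sketch below assumes a uniform random pick between the base and base + range (the guide does not say how the value is chosen) and models ∞ as `math.inf`. It also shows the Cell Root Offset arithmetic as note names. Both function names are hypothetical.

```python
import math
import random

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def pick_with_range(base, spread, rng: random.Random):
    """Return a value between base and base + spread.

    Hypothetical helper: assumes a uniform random choice. An infinite base
    (Gen Bars set to ∞) stays infinite and the range is ignored.
    """
    if math.isinf(base):
        return base
    if isinstance(base, int) and isinstance(spread, int):
        return rng.randint(base, base + spread)  # whole bars or repeats (inclusive)
    return rng.uniform(base, base + spread)  # e.g. Playlist Duration in seconds


def cell_root(mix_root: str, semitone_offset: int) -> str:
    """Cell Root = Mix Root + semitone offset, as a note name (octave wraps around)."""
    index = NOTE_NAMES.index(mix_root)
    return NOTE_NAMES[(index + semitone_offset) % 12]


rng = random.Random(1)
bars = pick_with_range(8, 4, rng)            # somewhere in 8..12 bars
forever = pick_with_range(math.inf, 4, rng)  # ∞ ignores the range
secs = pick_with_range(600.0, 300.0, rng)    # 600 s plus up to 300 s
print(bars, forever, round(secs), cell_root("C", 7))
```

For example, a Mix Root of C with a Cell Root Offset of +7 semitones gives a Cell Root of G.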

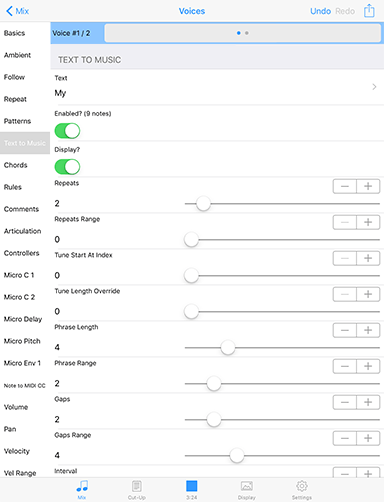

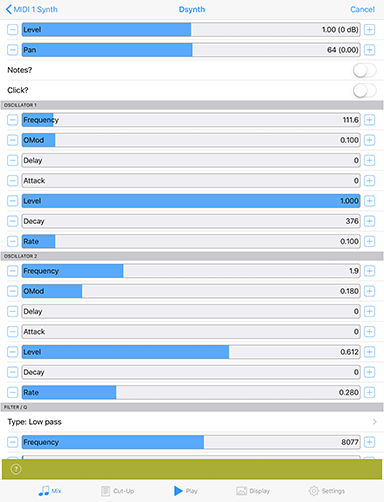

Mix Mode: Parameter Editor - Voices

The Voices screen is where you edit all the parameter values for each of the voices in the piece in the cell. You get to it from the Network panel by tapping on a Voice button and selecting Edit from the pop-up menu.

Simply select the Parameter Group on the left hand side, and then edit the parameters on the right.

For full information on the Intermorphic Music Engine (IME) parameters refer to the IME section of this guide.

| UI Item | Description |

| Undo/Redo | Undo or Redo your last parameter change. |

Central area |

|

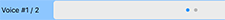

Voice Selector Bar |

The Voice selector bar shows the number of voices in the piece (in the selected cell). Tap the left or right side of this bar to move to the previous or next Voice. |

Parameter Group List |

Tap an item in the left hand Parameter Group list to scroll the right panel to that section. Tip: Tap "Basics" to scroll the Parameter Values panel to the top so you can see the Voice name. For full information on the Intermorphic Music Engine (IME) parameters refer to the IME section of this guide. |

Selected Parameter Group |

The selected Parameter Group. |

Parameter Values Panel |

The Parameter Values panel is where you change the values for parameters in the group. Swipe up / down in this panel to scroll up or down. For full information on the Intermorphic Music Engine (IME) parameters refer to the IME section of this guide. |

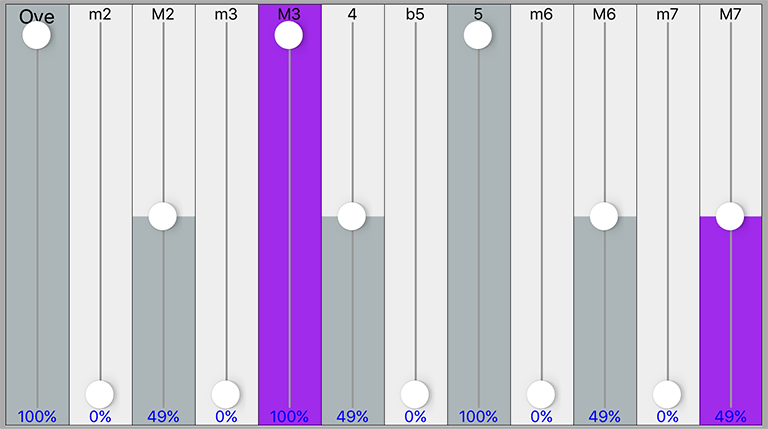

Mix Mode: Parameter Editor - Rule

This view is where you select and (real-time) edit the Rules used in your voice or piece - be they Scale, Harmony, Next Note or Rhythm rules.

| UI Item | Description |

Top area |

|

Rule Element |

This control lets you change the rule elements. Tap on a rule element to change its value. The composition engine accommodates all rule changes in real time, which can be a lot of fun! Whenever a voice composes using the currently selected rule, the rule element flashes in the colour of that voice's voice type. This is primarily to remind you that other voices rely on that rule, so if you change it, the way those voices compose may change too. It can be useful to have the same rule used by all voices, but if you want to, each voice can have its own rule! For full information on the Intermorphic Music Engine (IME) parameters refer to the IME section of this guide. |

List area |

|

Rules List |

Below the Rule Edit area is a scrollable list that contains all of the defined rules. Tap a rule to select it so that you can edit it in the Rule Edit area above. |

Toolbar |

The toolbar contains the Add Rule button, as well as the Edit Rule Name, Cut, Copy and Paste buttons and the Delete button. When you select the Add Rule button you are presented with a set of included default rules. For Scale rules these may include Major, Minor, Dorian, Hypodorian, Hypolydian, Hypomixolydian, Hypophrygian, Lydian, Mixolydian, Pentatonic and Phrygian. |
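The Rule Element description implies that a rule is a set of elements the engine consults as it picks notes. As an illustration only, assuming a Scale rule can be modelled as 12 weights (one per semitone above the root, 0 meaning never used) and that the engine makes a weighted random choice, a Python sketch might look like this. The weight values, the `MAJOR` constant and `pick_note` are hypothetical; see IME Rule Object for the real rule format.

```python
import random

# Hypothetical Scale rule: one weight per semitone above the root (0 = never used).
MAJOR = [100, 0, 50, 0, 50, 50, 0, 100, 0, 50, 0, 50]


def pick_note(rule: list[int], root_midi: int, rng: random.Random) -> int:
    """Pick a MIDI note: a weighted random semitone offset added to the root."""
    if len(rule) != 12 or sum(rule) == 0:
        raise ValueError("rule needs 12 weights with at least one above zero")
    offset = rng.choices(range(12), weights=rule, k=1)[0]
    return root_midi + offset


rng = random.Random(42)
notes = [pick_note(MAJOR, 60, rng) for _ in range(8)]  # root 60 = middle C
print(notes)
```

Editing an element while the piece plays would simply change the weights used for the next pick, which is one way to picture the real-time rule changes described above.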

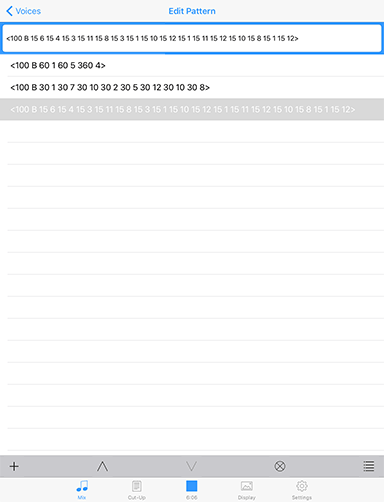

Mix Mode: Parameter Editor - Pattern

This view is where you edit all the Pattern parameters. Patterns are a little tricky to use because string editing is limited, so be aware. We suggest using TTM Patterns instead.

| UI Item | Description |

Top area |

|

Pattern Edit field |

Tap in the field to edit the IME Pattern or Sub-pattern. The IME Pattern Syntax can take a bit of getting used to and you will need to study it carefully if you want to use patterns. |

List area |

|

Pattern List |

Below the Pattern Edit field is a scrollable list that contains all of the defined patterns or sub-patterns. Tap a pattern to select it so that you can edit it in the Pattern Edit field above. |

Toolbar |

The toolbar contains the Add Pattern button, as well as the Up and Down buttons to move patterns up and down, the Delete pattern button and the Presets button. Select the Presets button to display a list of included preset patterns to choose from. IMPORTANT: The pattern you select in the presets list OVERWRITES your currently selected pattern. Tip: Patterns can be used to create hidden structure in a piece. Set up a voice to be of Voice Type Fixed Pattern and set its volume to zero (e.g. in the Blend view), but do not mute it (or it will not count in terms of composition). Then, follow that Fixed Pattern voice with another voice. Set up the following voice with Chords Strategy set to Chordal Harmony and you will never hear the pattern, but it will be used as an invisible skeleton around which to compose! If you want to hear the pattern, set Follows Strategy to Semitone Shift. |

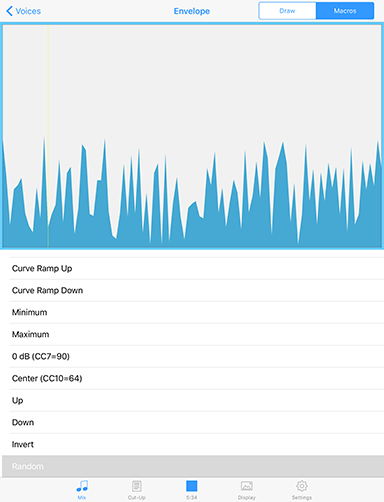

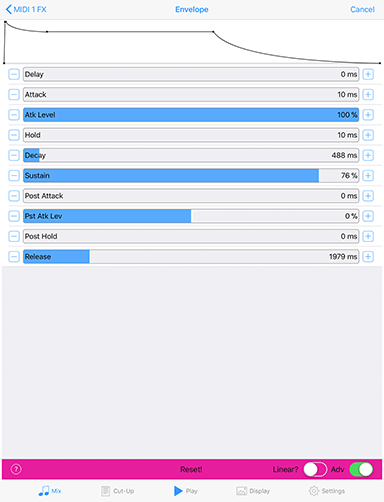

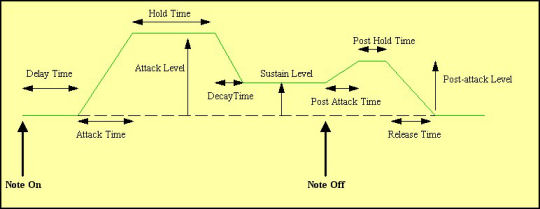

Mix Mode: Parameter Editor - Envelope

Voice Envelopes are supported for the following parameters:

- Volume

- Pan

- Velocity

- Velocity Range

- Velocity Change

- Velocity Change Range

Envelopes work in the same way for all of these. This view is where you edit the Voice Envelopes.

Each envelope is a collection of up to 100 data points. A piece starts with the value at the left side of the envelope, and as it progresses, eventually ends up with the value from the far right of the envelope.
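As a sketch of the behaviour described above, assuming the engine interpolates linearly between neighbouring data points (the guide does not say whether it interpolates or steps), the envelope value at any point can be looked up from the piece's progress, from 0.0 (start) to 1.0 (end). The function name and the 0-127 example values are illustrative only.

```python
def envelope_value(points: list[float], progress: float) -> float:
    """Return the envelope value at `progress` (0.0 = piece start, 1.0 = piece end).

    `points` are assumed to be evenly spaced across the piece (the guide allows
    up to 100). Assumes linear interpolation between neighbouring points.
    """
    if not 1 <= len(points) <= 100:
        raise ValueError("an envelope holds between 1 and 100 points")
    progress = min(max(progress, 0.0), 1.0)  # clamp to the length of the piece
    if len(points) == 1:
        return points[0]
    position = progress * (len(points) - 1)  # fractional index into points
    i = int(position)
    if i == len(points) - 1:  # exactly at the end of the piece
        return points[-1]
    fraction = position - i
    return points[i] + (points[i + 1] - points[i]) * fraction


fade_in = [0.0, 64.0, 127.0]  # e.g. a Volume envelope rising over the piece
print(envelope_value(fade_in, 0.0), envelope_value(fade_in, 0.25), envelope_value(fade_in, 1.0))
# 0.0 32.0 127.0
```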

| UI Item | Description |

Envelope Mode |

Draw: Select this if you want to draw directly onto the envelope with your finger to change the values. Macro: Select this to use one of the various macro envelope editing tools. |

Envelope Editor |

|

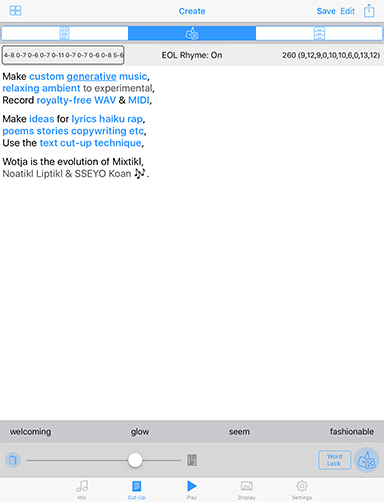

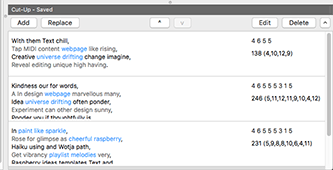

Cut-Up Mode: UI Overview

See: "Free Mode" & Limitations

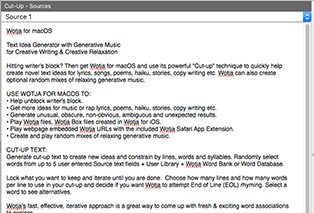

The powerful "cut-up technique" allows you to quickly generate ("Create") random word associations ("Cut-up") from a pool of words. This pool can include words from up to 5 user entered text fields ("Sources"), a user word library ("Word Library"), the customisable Wotja Word Bank ("Word Bank") and a non-editable Wotja Word Database ("Word Database").

You can lock down ("Lock") any words you want or, for just a selected word, choose one of the alternative cut-up words presented. You can use Rules ("Rules") to constrain your cut-up by lines, words and syllables.

Keep iterating until you find the interesting, inspiring or serendipitous combinations of words you are looking for. Save the best Cut-ups ("Saved") and then use them in real-world lyrics, poems, haikus, tweets etc.

For your cut-up you get to choose how many lines and how many words per line you want in it and whether you want Wotja to attempt End of Line (EOL) rhyming.
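The cut-up process described above can be sketched in Python as follows. This is a simplified illustration, not Wotja's algorithm: it assumes words are split on whitespace, that the Blend slider position on the Create screen (0.0 = Sources only, 1.0 = library only) acts as the probability of drawing a word from the library, and that locked words keep their line and word positions. Rules, syllables and EOL rhyming are ignored, and all names are hypothetical.

```python
import random


def cut_up(sources: list[str], library: list[str], words_per_line: list[int],
           blend: float, locked: dict[tuple[int, int], str],
           rng: random.Random) -> list[list[str]]:
    """Build a cut-up with len(words_per_line) lines.

    blend: hypothetical mapping of the Blend slider (0.0 = Sources only,
    1.0 = library only). locked: {(line, word): text} kept unchanged.
    """
    source_words = [word for text in sources for word in text.split()]
    lines = []
    for line_no, count in enumerate(words_per_line):
        line = []
        for word_no in range(count):
            if (line_no, word_no) in locked:
                line.append(locked[(line_no, word_no)])  # locked words never change
                continue
            # The blend decides which pool each unlocked word is drawn from.
            pool = library if rng.random() < blend else source_words
            if not pool:
                raise ValueError("the selected word pool is empty")
            line.append(rng.choice(pool))
        lines.append(line)
    return lines


rng = random.Random(7)
sources = ["the quiet river sings of distant light"]
library = ["ember", "hollow", "drift"]
for line in cut_up(sources, library, [3, 4], blend=0.3, locked={(0, 0): "the"}, rng=rng):
    print(" ".join(line))
```

Calling `cut_up` again with the same `locked` mapping mirrors pressing Create Cut-up repeatedly: only the unlocked words change.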

Tip: You can also quickly generate cut-up in the Text Editor screen.

In Wotja for macOS there are 3 main panels in the display.

In Wotja for iOS these are selected by the control at the top (shown above):

- Sources: where you input and store all the text you want to be used as a source for your cut-up. The sources are saved in your Wotja file and you can have up to 5 of them.

- Create: where you create your cut-ups. The created cut-up, locks and associated Rules are saved in your Wotja file.

- Saved: where you can see all the cut-ups you have chosen to save. These cut-ups are saved in your Wotja file.

Important: We respect copyright and strongly encourage you to do the same. That means if you use Wotja to come up with a lyric you like, make sure that it does not infringe the copyright of any of your source material. Wotja is pretty good at creating random "cut-ups", but it is just a tool for you to use to generate ideas. It is ultimately your responsibility to make sure you are not infringing anyone else's copyright.

Cut-Up Mode: Sources

The Sources screen is the first of the three Cut-Up related screens.

It is where you input and store all the text you want to be used as a source for your cut-up. The sources are saved in your Wotja file and you can have up to 5 of them.

Each source field is plain text only and you can type the data in directly, or paste-in some text from the clipboard.

macOS: There are also various other options available from a pop-up menu, which you open by right clicking or control clicking.

The Sources text boxes are all editable, which means you can move words around by cutting and pasting, or even edit or manually add in new words.

Tip 1: The more interesting the source material you use, the more interesting the cut-up suggestions generated. You can think of your favourite source materials like spices or ingredients, and then build up a collection of your favourites to add when you want. You can then add as little or as much as you like!

Tip 2: Why not try using text from some current news item as a Source - that way you can make sure you use words that are current, and some interesting themes can seemingly come out of nothing!

Tip 3: The more words there are in your Sources the more options for cut-ups.

Tip 4: To use the "Sources Only" cut-up mode requires that you have at least one source defined.

| UI Item | Description / Menu Items |

Action |

Popup Action menu.

|

Delete |

Clear out this particular source. |

Text Area |

This text area displays your source. It supports the standard iOS text controls (copy, paste etc.). There is no formatting of text in a source. Wotja for macOS: You can access a context sensitive pop-up menu by right clicking/control clicking on one of the text fields in the Sources area, or on a word in this area. Right/Ctrl click on a word: NB: You can click on a word or, specifically for the search option, select (highlight) one or more words.

Click on the background

|

Page Control |

Either tap a dot or swipe the screen left or right to move between sources. |

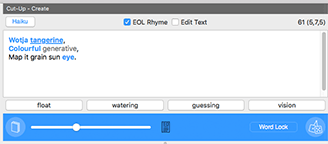

Cut-Up Mode: Create

The Create screen is the second of the three Cut-Up related screens.

It is where you create your cut-ups. The created cut-up, locks and associated Rules are saved in your Wotja file.

Tapping a word will lock/unlock it (a locked word is shown in red and will not be changed when you press the Create Cut-up button).

| UI Item | Description / Menu Items |

Action |

Popup Action menu.

|

Save |

Saves this cut-up to Saved. If this is the first one you save you see no pop-up. If you already have a saved cut-up in Saved, you see a pop-up with two options:

|

Edit |

Select to be able to edit the text in the cut-up area as plain text e.g. perhaps to change a word or even add new words (in which case a suitable new matching Rule will be generated). When in Edit mode, click in a word to place the cursor and press the Esc key to see a drop list of words; or double click a word to select it (once selected if you wait a second and click/hold the word again you can drag it). Press "Done" when finished to revert back to standard cut-up screen behaviour where tapping a word will lock/unlock it. |

Rules |

This top left button shows you the rule being used to create your cut-ups. If the rule has a title you will see it here, otherwise you will see the rule syntax. Tap on the Rules button to go to the Rules List where you can select a rule. |

EOL (End of Line) toggle |

This setting determines whether Wotja attempts to rhyme the ends of lines with other lines. For a truly random cut-up, leave this set to EOL - OFF. |

Character Count |

Displays the number of characters in your cut-up (useful to know if you plan on tweeting your cut-up) and number of syllables in each line. |

Text Area |

Lines are alternately coloured black and dark grey so that if your text wraps you can easily see which line the words are in. Tap a word to lock or unlock it (it shows in red when locked). When you select a word (locked or unlocked) it is also underlined to show that it is the selected word (e.g. "inspiring" in the screenshot above). To select a word without changing its lock state, tap on it, then drag away from it and release. Even though the word does not display an underline, it is now selected. |

Word Alternatives Bar |

This is displayed just above the bottom toolbar. If you tap on one of the four alternative words (e.g. "creative" in the screenshot above) then that word will replace the selected word (e.g. "inspiring" in the screenshot above). To refresh the alternative words shown, either tap a blank part of the cut-up area or tap the Create Cut-up button. |

Library Button |

Located to the left of the Blend slider, tap this to view the User Word Library, where you can add your own favourite words ("spices") to mix with words from your sources. In the User Library click the Show button to view the Wotja Word Bank (containing over 650 selected words). Both are editable/customisable and operate as standard text fields. The User Word Library and Wotja Word Bank are both stored outside of the Wotja file, so these words are available to use in cut-ups for any file. |

Blend Slider |

The position of the Blend slider determines what proportion of words are selected from your Sources or from the User Library and/or Wotja Word Bank. Left means use words only from Sources, and right means use words only from your User Library and/or Wotja Word Bank. Anywhere in between is a blend! |

Cut-Up Mode Selector |

Tap the Mode Selector to choose how your cut-up is created. Word Lock: In this classic mode, words can be locked, and the non-locked words are changed using the active rule (including syllables), and are sourced from Sources text, User Library and any Word Bank data you might have defined. This mode is very useful for fine-tuning a cut-up. Sources Only: This new cut-up mode analyses the structure of the Sources text and lets you create cut-up with a more natural feel; in this mode the cut-up is created from Sources ONLY and it uses the active rule (ignoring syllables) but EOL rhyming and word locks are ignored. |

Create Cut-up Button |

This button looks like a dice and is located on the right of the tools panel. Provided you have first added some words to at least one of the Sources text boxes above (or you have some words in your User Library, or the Wotja Word Bank toggle is on, AND the Blend slider is not hard over to the left), when you click this you'll see a Cut-up appear in the text area above it. Each time you click this button all non-locked words will be replaced by words selected at random from your word pool and/or Library/Word Bank. |

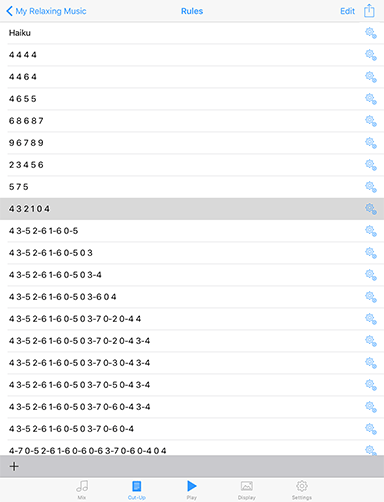

Rules List |

|

In the Rules List you will see the available Rules and can select the rule you want to have used for your cut-up. If there isn't one there you want to use then you can easily create and use any rule you want - see the Rule Editor. Tip: Rules are stored in a Wotja file. When you create a new cut-up then the Rules List is populated with the default rules. The cut-up uses only the rule you select and you can delete any of these rules you don't want to be used for cut-up. Edit:

Actions:

Rule List item:

|

|

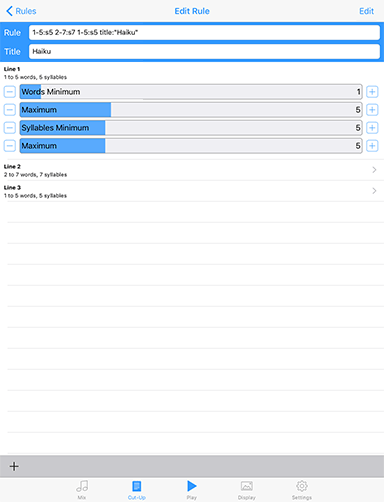

Rule Editor |

|

See: "Free Mode" & Limitations. The Rule Editor provides an easy, graphical way to create rules. If there isn't a rule you want to use in the Rules List, or you want to edit one, you can do that easily. There are 3 ways to edit or create Rules. Either:

Editor Controls:

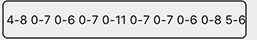

Rule Syntax

The most basic Rule consists of a series of numbers. Each number stands for the number of words to put in a particular line of the cut-up. For example, the numbers "3 4 5 6" represent four lines of cut-up, as there are four numbers: the first line has three words, the second four, the third five and the fourth six. There is a lot more you can do if you want to, and it then helps to understand the Rule syntax.

Line Rule Syntax (Tip: items in [ ] are optional):

LineWordsMin [- LineWordsMax[:SyllablesMin [-SyllablesMax]]]

Composite Syntax:

LineRule1 LineRule2 LineRule1 [title:"Name"]

Using the above syntax it is easy to create a Haiku rule with 3 lines of words within a range and a fixed number of syllables (5, 7, 5) for those lines, e.g.:

1-5:s5 2-7:s7 1-5:s5 title:"Haiku" |

|
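To make the Rule syntax above concrete, here is a minimal Python parser for it. It is a sketch written from the syntax description and the Haiku example only, not Wotja's own parser: it assumes line rules are separated by spaces with no spaces inside a rule (as in the example), accepts an optional "s" before the syllable numbers (used in the example but not shown in the syntax line), treats a missing maximum as equal to the minimum, and reads an optional title:"Name" token. `LineRule` and `parse_rule` are hypothetical names.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class LineRule:
    words_min: int
    words_max: int
    syllables_min: Optional[int] = None
    syllables_max: Optional[int] = None


# LineWordsMin [-LineWordsMax] [:[s]SyllablesMin [-SyllablesMax]]
LINE_RULE = re.compile(r"^(\d+)(?:-(\d+))?(?::s?(\d+)(?:-(\d+))?)?$")
TITLE = re.compile(r'title:"([^"]*)"')


def parse_rule(text: str) -> tuple[list[LineRule], Optional[str]]:
    """Parse a cut-up rule into per-line rules plus an optional title."""
    title_match = TITLE.search(text)
    title = title_match.group(1) if title_match else None
    body = TITLE.sub("", text)  # remove the title before splitting into line rules
    lines = []
    for token in body.split():
        m = LINE_RULE.match(token)
        if not m:
            raise ValueError(f"not a line rule: {token!r}")
        w_min = int(m.group(1))
        w_max = int(m.group(2)) if m.group(2) else w_min
        s_min = int(m.group(3)) if m.group(3) else None
        s_max = int(m.group(4)) if m.group(4) else s_min
        lines.append(LineRule(w_min, w_max, s_min, s_max))
    return lines, title


rules, title = parse_rule('1-5:s5 2-7:s7 1-5:s5 title:"Haiku"')
print(title, [(r.words_min, r.words_max, r.syllables_min) for r in rules])
# Haiku [(1, 5, 5), (2, 7, 7), (1, 5, 5)]
```

The basic rule "3 4 5 6" parses to four lines whose minimum and maximum word counts are equal and which have no syllable constraint.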

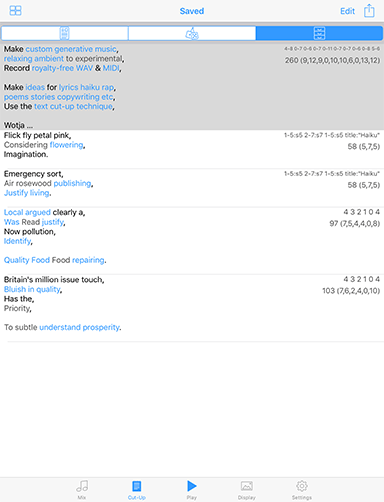

Cut-Up Mode: Saved

The Saved section is the third of the three Cut-Up related screens / sections.

It is where you can see all the cut-ups you have chosen to save. These cut-ups are saved in your Wotja file.

| UI Item | Description / Menu Items |

Action |

Popup Action menu.

|

Edit |

Popup menu.

|

Saved Cut-up |

Tap a saved Cut-Up to select it. Export Saved Cut-Up: Right click a saved Cut-Up for a pop-up menu with options to copy it to the Clipboard or share it via standard Apple services. |

Add / Replace / Up/Down / Edit / Delete / Actions |

Add: Copy the current Cut-Up to the saved Cut-Ups. Replace: Replace the selected saved Cut-Up with the current Cut-Up. Up/Down: Use these buttons to change the order of saved Cut-Ups. Edit: Copy the selected saved Cut-Up to the Cut-up screen for editing. Delete: Delete the selected saved Cut-Up. Export All: Tap the right hand Actions button for a pop-up menu with options to copy all saved Cut-Ups to the Clipboard or share them via standard Apple services. |

Cut-up |

Edit saved cut-up: Tap on a saved cut-up and in the pop up message select OK to copy it to the Cut-up screen for editing. Delete saved cut-up: Swipe left on a cut-up and select Delete. Export one cut-up: Swipe left on a cut-up and select Export. |

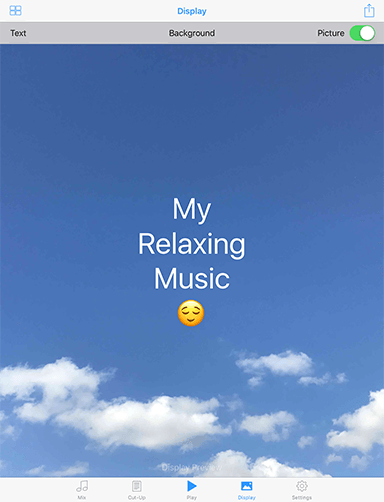

Display Mode

See the Video: Wotja iOS: Playlists (8m)

The Display Tab (also referred to as Display Screen) is where you access the screens that let you set up how your wotja looks, i.e. for Fullscreen display when someone loads it.

If you want to add text to your wotja you can do that in the TTM view in the Design screen.

| UI Item | Description / Menu Items |

Files |

Back to Files screen. For details on the Save Changes popup displayed when you press this button see the description of this button in the UI Elements section. |

Action |

Popup Action menu. For details see the description of this button in the UI Elements section. |

Text |

Displays a pop-up menu where you can select:

|

Background |

Go to a screen where you can set the background colour via RGB sliders or an HSB colour wheel. |

Picture |

iOS: Goes to the Photos app screen where you can select a picture to use as a background image to your wotja. Turn off the toggle to remove the image from the wotja when you next save it. macOS: Select an image using Finder. Tip: If you want to export an imageless (i.e. small) wotja to clipboard for embedding in a webpage, then from the Action menu select "Export Wotja URL to Clipboard (no image)". |

Full Screen |

iOS Only: Tap anywhere in the center area to go to Full Screen mode; when in Full Screen mode, tap anywhere to return to the Display screen. In Full Screen mode the background picture, if attached, will slowly auto-pan around the screen. |
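The RGB sliders and the HSB colour wheel on the Background screen are two ways of describing the same colour. Python's standard `colorsys` module (which calls HSB "HSV") converts between them; the 0-255 slider scale and the helper names below are assumptions for illustration, not Wotja's own values.

```python
import colorsys


def hsb_to_rgb255(hue_deg: float, saturation: float, brightness: float) -> tuple[int, int, int]:
    """Convert HSB (hue in degrees, saturation/brightness 0-1) to 0-255 RGB."""
    r, g, b = colorsys.hsv_to_rgb((hue_deg % 360) / 360.0, saturation, brightness)
    return round(r * 255), round(g * 255), round(b * 255)


def rgb255_to_hsb(r: int, g: int, b: int) -> tuple[float, float, float]:
    """Convert 0-255 RGB to HSB (hue in degrees, saturation/brightness 0-1)."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    return h * 360.0, s, v


print(hsb_to_rgb255(210, 0.6, 0.4))  # (41, 71, 102), a muted night-sky blue
```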

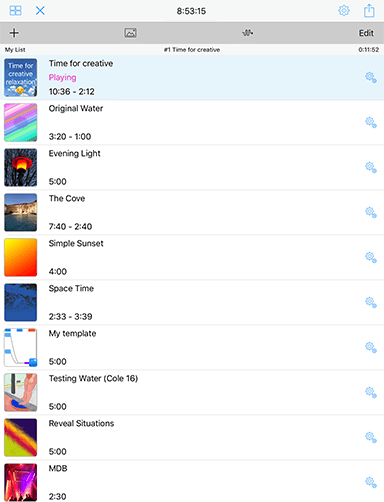

Player: List

See: "Free Mode" & Limitations

See the Video: Wotja iOS: Playlists (8m)

The List Player is used to:

- Play Wotja List and user exported Wotja Box files

- Create Wotja List files

  - iOS: Files > Create New > Play List

  - macOS: Wotja Menu > Files > New > Playlist

  - Playlists can reference/include up to 20 files

- Export user created Wotja Box files from Wotja List files

- Add Wotja and Noatikl files to a playlist

- Re-order the files in a playlist

- Edit List Item Override settings for both Wotja List and Wotja Box files (these values are saved to the .wotjalist or .wotjabox file respectively)

It is opened by:

- Creating a new Wotja List file (.wotjalist)

- Opening a Wotja List file (.wotjalist)

- Opening a user exported Wotja Box file (.wotjabox)

List File Types

- Wotja List (.wotjalist):

  - Playlists that contain references to Wotja / Noatikl / Mixtikl files saved to your iCloud folder. If you change the file in Wotja, the playlist simply references that changed file.

- Wotja Box (.wotjabox):

  - Container files that can be exported from a playlist. They include all parameter settings, text and images for the included Wotja / Noatikl / Mixtikl mixes/pieces, which makes them great for sharing your favourite creations. They do not, however, include additional referenced media such as loops. If your mix requires these additional files, they first need to be placed in your iCloud Folder (Wotja iOS) or in the Intermorphic Folder (Wotja Mac).

| UI Item | Description |

Sleep Time |

Shows the Wotja Sleep Time (i.e. how much longer Wotja can play for before it will stop playing). This value is also limited by the maximum play time allowed in your IAP tier. Tap the right hand Settings button to go to the Settings Screen where you can set the Sleep Time. |

Action |

|

Playlist Name |

The name of the Playlist (Album). |

File Name |

The name of the currently selected File. |

File Play Time |

Shows the Play Time left for the current file. This value can be adjusted in Item Overrides. This value is also limited by the maximum play time allowed in your IAP tier. |

Add Item |

Tap to add a file to your playlist. |

Album Thumbnail |

Tap to add a thumbnail to your playlist. This image will show as the thumbnail in the Files screen. |

Sequential Play / Random Play |

Tap to toggle between sequential and random play. |

Edit |

Tap to be able to reorder your playlist. |

|

Tap the thumbnail to go to Full Screen display (see below). |

|

The top line is the file name. The center line displays "Playing" when the file is playing. The bottom line displays the minimum and maximum piece time (these values are set in Item Overrides). Tap the central area to play or stop the file. When the file is playing the item will be a light blue colour. |

|

Tap to go to the Item Overrides screen. |

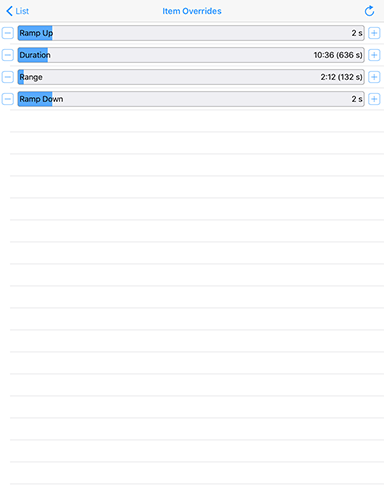

Item Overrides Screen |

These settings override any related settings that may be in the File (these File related Playlist settings are set in the Cell Rules panel in the Mix Tab).

|

Full Screen |

Full Screen play is for when you want to sit back, look at any included image/message and reflect on the music. Tap the center of the screen to return to the Play List screen. |
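The List Player combines several limits: Item Overrides replace the matching settings saved in each file, a playlist holds at most 20 files, play time is capped by the maximum allowed in your IAP tier, and the Sleep Time stops playback overall. The Python sketch below models that under stated assumptions: the setting keys are hypothetical, each item's duration is picked between Playlist Duration and Playlist Duration + Range, and Random Play is modelled as a shuffle. It is not Wotja's implementation.

```python
import random
from dataclasses import dataclass, field

MAX_ITEMS = 20  # the guide says a playlist can reference up to 20 files


@dataclass
class PlaylistItem:
    name: str
    file_settings: dict  # hypothetical keys, e.g. {"duration": 600, "duration_range": 300}
    overrides: dict = field(default_factory=dict)  # Item Overrides saved in the list file

    def effective_settings(self) -> dict:
        """Item Overrides win over the settings saved in the file itself."""
        return {**self.file_settings, **self.overrides}


def add_item(playlist: list[PlaylistItem], item: PlaylistItem) -> None:
    if len(playlist) >= MAX_ITEMS:
        raise ValueError(f"a playlist can hold at most {MAX_ITEMS} files")
    playlist.append(item)


def play_order(playlist: list[PlaylistItem], shuffle: bool, rng: random.Random) -> list[PlaylistItem]:
    """Sequential Play keeps the list order; Random Play is modelled as a shuffle."""
    order = list(playlist)
    if shuffle:
        rng.shuffle(order)
    return order


def play_seconds(item: PlaylistItem, tier_max: float, sleep_left: float, rng: random.Random) -> float:
    """Pick a duration in [duration, duration + range], then apply the tier and Sleep Time caps."""
    s = item.effective_settings()
    base = s["duration"]
    wanted = rng.uniform(base, base + s.get("duration_range", 0))
    return min(wanted, tier_max, sleep_left)


rng = random.Random(3)
playlist: list[PlaylistItem] = []
add_item(playlist, PlaylistItem("Calm.wotja", {"duration": 600, "duration_range": 300}))
add_item(playlist, PlaylistItem("Drift.wotja", {"duration": 900}, overrides={"duration": 120}))
for entry in play_order(playlist, shuffle=False, rng=rng):
    print(entry.name, round(play_seconds(entry, tier_max=1800, sleep_left=3600, rng=rng)))
```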

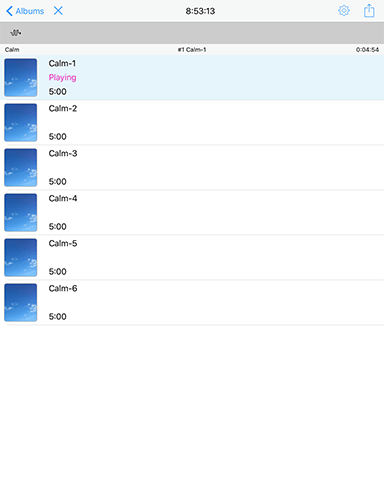

Player: Built-In Album

See: "Free Mode" & Limitations

See the Video: Wotja iOS: Playlists (8m)

The "Album" Player is used only to play Wotja's "Built-In" albums. It is a slightly less functional version of the List Player in that it currently does not let you adjust list "Item Overrides" or re-order the album.

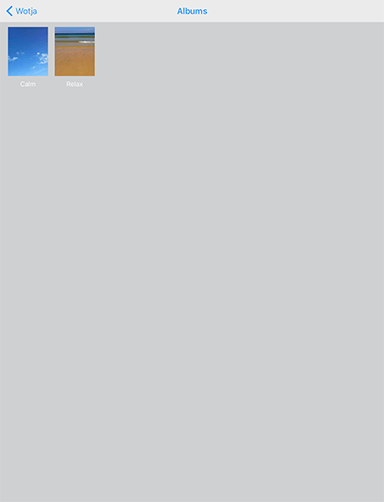

Built-In Albums

Wotja currently includes two built-in albums:

- "Calm"

- "Relax"

If you wish to adjust list "Item Overrides" or re-order the album, first export the Album as a Playlist via the Action button "Save Wotja Box Album to File". Then open the exported Playlist from the Files screen and change the settings there.

Access:

- iOS: Files > Album (icon; menu bar, top left)

- macOS: Wotja > Window > Wotja Player > Built-In Albums

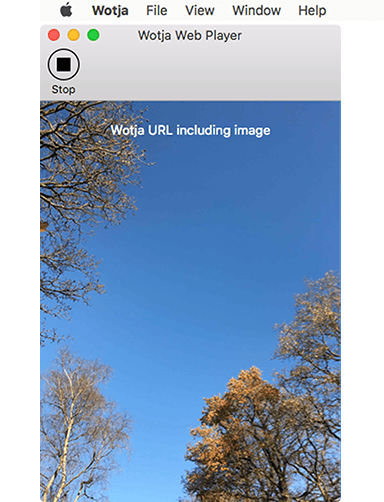

Player: Web (macOS)

See: "Free Mode" & Limitations

The Wotja "Web" Player is for Safari for macOS only and lets you:

- Play Wotja Files (.wotja, .wotjalist and .wotjabox) that have been embedded in webpages as Wotja URLs when that webpage is visited in Safari (see below).

- See here for details on how to embed one and "wotjafy" a webpage.

Access:

- macOS: Wotja > Window > Wotja Player > Show Window

How to use the Wotja Web Player to play webpage-embedded Wotja URLs in Safari:

- Get Wotja from the Mac App Store and install it (it is free!).

- From the Safari menu select Safari > Preferences > Extensions.

- Check the tickbox for "Wotja" (or e.g. "Wotja 2017", the annual version, if installed). This also adds a "Wotja" button to the Safari toolbar.

- Click that button to bring the Wotja Player window to the foreground.

- Return to a webpage that has an embedded Wotja URL and you should hear it play!

- Remember to press the Wotja Player Play button (or button in popup) to restart play once it has stopped.

| Item | Description |

| Mac Menu | The Web Player menu includes the following top level menu items:

|

Play/Stop Button |

Press to stop/start playback. Pressing Play also resets the Play timer so you can play for another 3 minutes. |

Display Area |

Any TTM text that is included in the Wotja file and set to Display appears at the top of this area. The area uses the same background colour as the Wotja file, and any image included in the Wotja file displays in the center. |

Player: Apple Watch

The secret of Wotja for Apple Watch is that it is very simple. It is primarily a player, but it also lets you randomise a mix. With a paired iOS device you can:

- Stop/start a mix loaded in Wotja for iOS, or

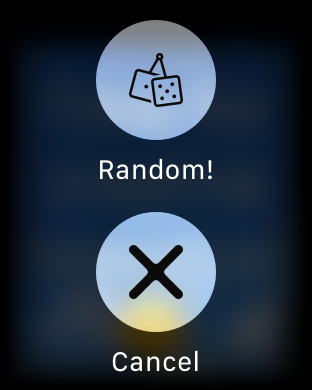

- Easily create and play a new random mix using a 3D Touch menu.

Random mixes can be great if you just want to hear something new. Perhaps you are on a walk or a run, or just sitting down for some quiet contemplation.

What displays in the background on the Apple Watch is simply the Wotja mix file thumbnail!

| Item | Description |

Main UI |

All you need to do is tap!

|

3D Touch Menu |

To display this menu, 3D Touch (press firmly on) the Wotja for Apple Watch app.

|

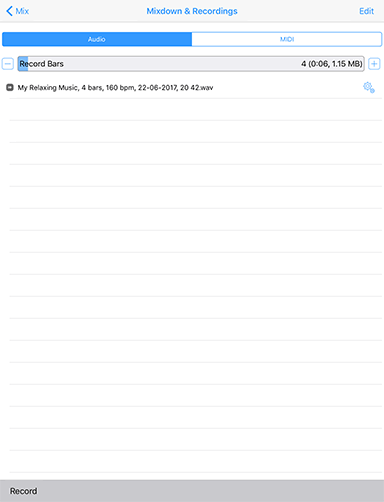

Mixdown & Recordings

See: "Free Mode" & Limitations

The Mixdown & Recordings screen is where you can create and preview Audio or MIDI mixdown recordings.

This screen is only accessed from the "Mixdown & Recordings" Action menu item in Mix Mode:

- Mix Mode > Action (icon)

You can set the number of bars you want to record (up to 100 bars by default, extendable via the Max Bars slider in the Mix segment of Settings), and you can make either 48 kHz stereo WAV or MIDI mixdown recordings. To start a mixdown recording, tap the "Record" button in the bottom toolbar.

For audio recordings you can set the Ramp in and Ramp down times (0 to 20 seconds) in the Mix section of the Cell & Mix Rules panel.

Recordings you make are saved to the Wotja iCloud folder or Wotja folder depending on the "Use iCloud" setting (iOS > Settings > Wotja > Use iCloud).

Note: Mixdown recordings are not live recordings; they are rendered as fast as your device allows, and making them requires sufficient free device memory.

Notes:

When a recording is made it is held in memory before iOS flushes it to the file system.

Bytes per second = 48,000 samples × 2 channels (stereo) × 2 bytes per sample (16-bit) = 192,000. 100 bars of 4/4 at 60 bpm is 400 beats, i.e. 400 seconds of recording (6 minutes 40 seconds). In bytes this is 192,000 × 400 = 76,800,000, or about 77 MB. At 30 bpm the same 100 bars last 800 seconds, or about 154 MB.

Each recording also needs space in the file system: for example, ten 100 MB recordings take up 1 GB.

If you're using iCloud, remember that these files (e.g. 10 files totalling 1 GB) are also synced with the cloud.
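The arithmetic in these notes can be reproduced with a few lines of code. The following is a minimal Python sketch, assuming uncompressed 16-bit stereo PCM at 48 kHz (the 48000 × 2 × 2 figure above) and a constant tempo; it ignores the small WAV header, and the function names are hypothetical rather than part of Wotja.

```python
# Hypothetical helper for estimating mixdown WAV sizes; not part of Wotja.
# Assumes uncompressed PCM: 48 kHz, 2 channels (stereo), 2 bytes (16-bit) per sample.
SAMPLE_RATE_HZ = 48_000
CHANNELS = 2
BYTES_PER_SAMPLE = 2


def recording_seconds(bars: int, beats_per_bar: int, bpm: float) -> float:
    """Duration of a mixdown of `bars` bars at a fixed tempo."""
    total_beats = bars * beats_per_bar
    return total_beats * 60.0 / bpm


def recording_bytes(seconds: float) -> int:
    """Approximate audio data size in bytes, ignoring the WAV header."""
    bytes_per_second = SAMPLE_RATE_HZ * CHANNELS * BYTES_PER_SAMPLE  # 192,000
    return round(bytes_per_second * seconds)


if __name__ == "__main__":
    for bpm in (60, 30):
        secs = recording_seconds(bars=100, beats_per_bar=4, bpm=bpm)
        size_mb = recording_bytes(secs) / 1_000_000  # decimal megabytes
        print(f"100 bars of 4/4 at {bpm} bpm: {secs:.0f} s, about {size_mb:.1f} MB")
```

Running it prints 400 s and 76.8 MB for 60 bpm, and 800 s and 153.6 MB for 30 bpm, matching the rounded 77 MB and 154 MB figures above (1 MB = 1,000,000 bytes here).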

| UI Item | Description |

Mix button |

Tap to go back to the Mix Mode screen. |

Title |

When a recording is being made this will change to indicate the progress of the mixdown. |

Edit button |

Tap to access the usual controls for deleting recordings. |

Export |

Tap to open a pop-up action sheet where you can choose where to export your recording, e.g. iCloud Drive, Dropbox etc. |

Audio/MIDI selector |

Tap to select the file type you want to record. The two supported mixdown recording formats are 48 kHz stereo WAV (Audio) and MIDI.

|

Bars selector |

To change the bars value, either tap the "-" or "+" buttons, drag your finger along the slider, or double tap the slider for a pop-up where you can enter a value manually. You can increase the maximum value via the Max Bars slider in Settings > Mix. |

Recording (Audio file / MIDI file) |

The recording item uses an icon to indicate the file type, and the description shows the name, bars, tempo and date/time. |

Record button |

Tap to start a recording. While a recording is being made this button changes to "Stop", which you can tap to stop the recording. The recording progress is displayed at the top of the screen in place of the screen title. |

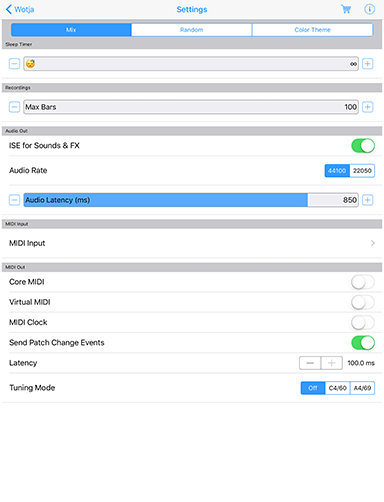

Settings: Mix

The Mix "segment" in the Settings screen is where you configure how you want Wotja to play its sounds and, amongst other things, how it is used with Audiobus / Inter-app Audio [both iOS only] and MIDI Out/In.

This screen is accessible in the following places in Wotja:

macOS:

- Wotja Menu > Window > Wotja Settings > Mix

- Mix Mode > Randomize button (dice icon) > Randomization Settings > Mix

iOS:

- Files > Settings (icon) > Mix

- All screens > Settings tab > Mix

| Item | Description |

| Information | Select for links to further information such as version number, links to this user guide etc. Also shown are links to where to find more Paks and more Wotjas. It also includes a link to a simple in-app Game [iOS only]:

|

Sleep Timer |

|

| Timer Slider | (Default: Infinite) The sleep timer can be set from 1 to 60 minutes. Use it to set how long you want Wotja to play before it fades out over the last 30 seconds of play time. How long Wotja can play before play stops also depends on your IAP level; see Wotja Store. The timer is off when the slider is at the far left position, where the value is shown as ∞. |

Recordings |

|

| Max Bars Slider | (Default: 100 Bars) This setting lets you increase the number of bars you can use for mixdown recordings (see Mixdown & Recordings). IMPORTANT: Use with care, as recordings are large and your device must have sufficient free memory available. If you change this value, take note of the pop-up warning message first. Also be aware that recordings are stored in iCloud if you are using it, so large recordings could use a lot of your bandwidth. |

Audio Out |

|

| ISE for Sounds & FX | (Default: On) Enables ISE sounds. The ISE is Wotja's sound generator and is used by all voices. Turn this off when you want to play through one or more external synths and do not want to hear the ISE sounds as well. NOTE: When this is set to Off you will not hear anything from Wotja unless you have a MIDI device connected (you will then hear sounds through that instead). Note: Inter-App Audio and Audiobus are on by default (from Wotja 4.7 onwards, Audiobus 3 audio routing is also supported). To use either of these, route Wotja via an Inter-App Audio or Audiobus enabled app. |

| Audio Rate [iOS only] | (Default: 48 kHz or 44 kHz, auto-selected depending on device capabilities.) If Wotja struggles to play your mix without some audio breakup, try the alternative 24/22 kHz option, which uses fewer processor cycles. |

| Audio Latency [iOS only] | (Default: 850 ms) This setting is particularly useful if you are routing Wotja via Audiobus or Inter-App Audio: higher values minimise audio artifacts, which also depend on device power and mix/sound/FX complexity. If you are using Wotja to drive external MIDI synths, you may need to tweak the values both here and for MIDI Latency. Example: You're driving an external synth via MIDI and want to sync its audio output with the audio output from Wotja (ISE). First, make the Wotja audio latency as low as you are happy with. Then, change the MIDI latency (below) until the output from the third-party synth is in sync. However, many dependencies are outside Wotja's control. |

MIDI Input |

|

| MIDI Input button | Note: MIDI Input is only used by Listening Voices. Tap the MIDI Input button to go to the MIDI Input Devices screen where you will see toggles for all MIDI Input Devices detected by Wotja.

Enable MIDI Input toggle: Shown at the bottom of the screen. Make sure this is on (it is on by default) if you want Wotja to detect incoming MIDI! |

MIDI Out |

|

| Core MIDI | (Default: Off) When enabled, tells Wotja to send out MIDI events via Core MIDI. Apps that are Core MIDI enabled can take advantage of this. |

| Virtual MIDI | (Default: Off) When enabled, causes Wotja to present a number of Virtual MIDI ports (one Omni port covering all channels, plus 16 per-channel ports) and to send its MIDI events through these virtual ports over Core MIDI. |

| MIDI Clock | (Default: Off) When enabled, tells Wotja to send out MIDI Clock events over Core MIDI / Omni channel.

|

| Send Patch Change Events | (Default: On) When enabled, tells Wotja to send out MIDI Patch Change events. Turn this off if you want ONLY MIDI Note event information to be sent. |

| Latency | (Default: 100 ms) Sets the latency (in milliseconds) applied to MIDI events that Wotja sends out to Core MIDI / Virtual MIDI. Use it to help remove jitter if using e.g. Network MIDI. |

| Tuning [iOS only] | (Default: Off) Useful for tuning external analog synths: causes all notes sent out by Wotja to be "forced" to the specified pitch. |
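The MIDI Out options above act on individual MIDI messages. As an illustration only, the Python sketch below shows what dropping patch changes, forcing notes to one pitch and sending MIDI Clock could look like at the byte level. The message formats (Note On 0x90, Note Off 0x80 and Program Change 0xC0, each combined with a channel number, and 24 Timing Clock ticks per quarter note) come from the MIDI 1.0 standard; the function names and the way the options are applied are assumptions, not Wotja's actual code.

```python
# Hypothetical illustration of three MIDI Out options; not Wotja's implementation.
# Message formats follow the MIDI 1.0 specification.
from typing import List, Optional

NOTE_OFF = 0x80          # Note Off status (low nibble = channel 0-15)
NOTE_ON = 0x90           # Note On status
PROGRAM_CHANGE = 0xC0    # Program ("patch") Change status
CLOCKS_PER_QUARTER = 24  # MIDI Timing Clock (0xF8) ticks per quarter note


def note_on(channel: int, note: int, velocity: int) -> bytes:
    return bytes([NOTE_ON | channel, note, velocity])


def note_off(channel: int, note: int, velocity: int = 0) -> bytes:
    return bytes([NOTE_OFF | channel, note, velocity])


def program_change(channel: int, program: int) -> bytes:
    return bytes([PROGRAM_CHANGE | channel, program])


def filter_outgoing(messages: List[bytes], send_patch_changes: bool = True,
                    tuning_note: Optional[int] = None) -> List[bytes]:
    """Apply "Send Patch Change Events" and "Tuning"-style options.

    send_patch_changes=False drops Program Change messages, so only note
    messages are sent. If tuning_note is set, every Note On and Note Off is
    rewritten to that pitch, so each note-off still matches its note-on.
    """
    out = []
    for msg in messages:
        status = msg[0] & 0xF0  # strip the channel nibble
        if status == PROGRAM_CHANGE and not send_patch_changes:
            continue
        if status in (NOTE_ON, NOTE_OFF) and tuning_note is not None:
            msg = bytes([msg[0], tuning_note, msg[2]])
        out.append(msg)
    return out


def clock_interval_ms(bpm: float) -> float:
    """Milliseconds between MIDI Clock ticks at a given tempo."""
    return 60_000.0 / (bpm * CLOCKS_PER_QUARTER)


if __name__ == "__main__":
    outgoing = [program_change(0, 5), note_on(0, 64, 100), note_off(0, 64)]
    for msg in filter_outgoing(outgoing, send_patch_changes=False, tuning_note=69):
        print(msg.hex())
    print(f"{clock_interval_ms(60):.2f} ms between clock ticks at 60 bpm")
```

At 60 bpm one beat lasts 1,000 ms, so the 24 clock ticks per beat arrive roughly every 41.67 ms; doubling the tempo halves the interval.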

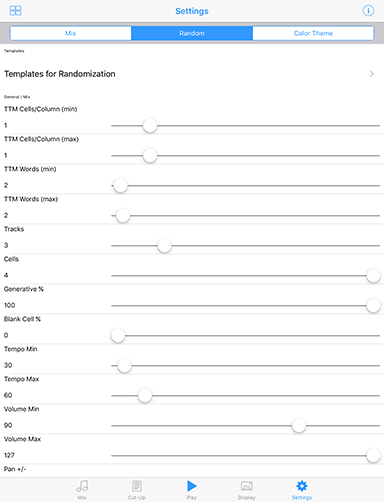

Settings: Randomization

See the Video: Wotja iOS: Mix Randomization Settings (12m)

The Randomization "segment" in the Settings screen is where you select the Randomization values that determine both how mix Cells are populated with templates when you use the Random function and how TTM voice text randomization is applied in the Text Editor screen.

This screen is accessible as follows in Wotja:

macOS:

- Wotja Menu > Window > Wotja Settings > Random

- Mix Mode > Randomize button (dice icon) > Randomization Settings > Random

iOS:

- Settings > Random

- Files > Create New > Wotja Mix (manual) Mix > Random Settings (icon) > Random

- Mix Mode > Randomize button (dice icon) > Randomization Settings > Random

The Random / Randomize function is manually available in two places in Wotja:

| Parameter | Description |

Templates |

|

| Templates for Randomization | Opens the Templates screen, so you can always easily change the pool of templates you want to randomize from. |

General / Mix |

|

| TTM Cells/Column (min) | (Default: 1) The minimum number of TTM Players that will be in a column, assuming you have a TTM Pak available for selection when randomizing. |

| TTM Cells/Column (max) | (Default: 1) The maximum number of TTM Players that will be in a column, assuming you have a TTM Pak available for selection when randomizing. |

| TTM Words (min) | (Default: 2) The minimum number of words that will be added to a TTM Text field when a new random mix is created, or in the TTM Text Editor when you use the Randomize button with the Randomise option selected. |

| TTM Words (max) | (Default: 2) The maximum number of words that will be added to a TTM Text field when a new random mix is created, or in the TTM Text Editor when you use the Randomize button with the Randomise option selected. |

| Tracks | (Default: 4) The number of Tracks, starting with Track 1, that will have their cell contents (below) randomized. |

| Cells | (Default: 4) The number of Cells in the Tracks above that will have their contents repopulated from the list of available templates. |

| Generative % | (Default: 70%) The chance that a randomized cell will be repopulated with a generative template. |

| Blank Cell % | (Default: 25%) The chance that a randomized cell will be left empty. |

| Tempo Min | (Default: 30 bpm) The minimum tempo of a new, random mix. |

| Tempo Max | (Default: 60 bpm) The maximum tempo of a new, random mix. |

| Volume Min | (Default: 90) The minimum volume of a track in a new, random mix. |

| Volume Max | (Default: 127) The maximum volume of a track in a new, random mix. |

| Pan +/- | (Default: 30) How far from center the pan of a Track can be in a new, random mix. |

Cells |

|

| Generative Bar Min | (Default: 8) The minimum base number of bars a generative template will play for; the range value (below) is added to this. Special value: If this is set to ∞ (far left), then once play in a Track reaches such a cell, the generative content in that cell will continue to play either for as long as the mix plays or until you manually select another cell in that Track to play. |